What is a Data Model? Types, Examples, and Best Practices

Quick Answer

A data model is an abstract representation that defines how data elements relate to each other and to real-world entities, specifying structure, relationships, constraints, and semantics. Data models provide blueprints for database design, ensuring data is organized logically and consistently to support business requirements and analytical needs.

Data models serve as the foundation for effective data management by providing structured frameworks that define how information should be organized, stored, and accessed. A well-designed data model reduces redundancy, ensures consistency, and enables efficient queries that support both operational processes and analytical insights. Data models are essential for data warehouses, data lakes, and business intelligence systems that need structured data access.

What is a Data Model?

A data model is a conceptual framework that defines the structure, relationships, and rules governing data within a system. It serves as a blueprint specifying which data elements exist, how they relate to each other, what constraints apply, and what operations are valid, bridging the gap between abstract business concepts and concrete database implementations.

Data models exist at multiple levels of abstraction, from high-level conceptual models that describe business entities and relationships in business terms, through logical models that define detailed structure independently of technology, to physical models that specify exactly how data will be stored in specific database systems.

Core Components

Entities: Objects or concepts about which data is collected, such as customers, products, orders, or transactions.

Attributes: Properties or characteristics of entities, such as customer name, product price, or order date.

Relationships: Associations between entities, such as customers placing orders or orders containing products.

Constraints: Rules governing valid data values and relationships, such as required fields, unique identifiers, or referential integrity.

Keys: Attributes that uniquely identify entity instances, enabling relationships and ensuring data integrity.
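
As a minimal sketch of how these components can map onto a concrete schema, the example below uses Python's built-in sqlite3 module with an in-memory database. The table and column names (customers, orders, email) are illustrative assumptions, and the SQL is SQLite's dialect rather than anything prescribed by this guide.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

conn.executescript("""
CREATE TABLE customers (              -- entity
    customer_id INTEGER PRIMARY KEY,  -- key
    name        TEXT NOT NULL,        -- attribute with a required-field constraint
    email       TEXT NOT NULL UNIQUE  -- attribute with a uniqueness constraint
);
CREATE TABLE orders (                 -- entity
    order_id    INTEGER PRIMARY KEY,  -- key
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id),  -- relationship
    order_date  TEXT NOT NULL         -- attribute
);
""")

conn.execute("INSERT INTO customers VALUES (1, 'Ada', 'ada@example.com')")
conn.execute("INSERT INTO orders VALUES (100, 1, '2024-01-15')")

# Referential integrity: an order for a customer that does not exist is rejected.
try:
    conn.execute("INSERT INTO orders VALUES (101, 999, '2024-01-16')")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

print("orphan order rejected:", orphan_rejected)
```

The foreign key on orders.customer_id expresses the "customers place orders" relationship, while NOT NULL and UNIQUE express constraints on individual attributes.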

Levels of Data Modeling

Conceptual Data Model

High-level representation focused on business concepts:

Purpose: Communicate data requirements between business stakeholders and technical teams without implementation details.

Content: Major entities, relationships, and business rules expressed in business terminology.

Audience: Business analysts, executives, and non-technical stakeholders who need to understand data structure without technical complexity.

Characteristics: Technology-agnostic, emphasizes completeness over implementation detail, uses business names for entities and relationships.

Notation: Often represented through entity-relationship diagrams (ERDs) using simple rectangles for entities and lines for relationships.

Logical Data Model

Detailed specification independent of database technology:

Purpose: Define the precise data structure, including all entities, attributes, relationships, and constraints, without committing to a specific database platform.

Content: All entities with complete attribute lists, logical data types, relationship cardinality, primary and foreign keys, and the normal form applied.

Audience: Data architects, database administrators, and developers who will implement physical models.

Characteristics: Technology-independent but detailed, includes all business rules expressible through structure, provides foundation for physical implementation.

Normalization: Typically normalized to third normal form (3NF) to eliminate redundancy and ensure consistency.

Physical Data Model

Implementation-specific database design:

Purpose: Specify exactly how the logical model will be implemented on a specific database platform, optimized for performance.

Content: Tables, columns with database-specific data types, indexes, partitions, constraints, stored procedures, and physical storage parameters.

Audience: Database administrators and developers implementing and maintaining the database.

Characteristics: Platform-specific, includes performance optimizations like denormalization, considers storage and query patterns, specifies physical organization.

Optimization: May diverge from the logical model through denormalization, redundancy, or pre-aggregation to improve query performance.
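
The gap between the logical and physical levels can be sketched as follows. The logical entity is written as a Python dataclass with logical types only, and the physical version is a SQLite table with platform-specific types and an index. The Order entity, its fields, and the index name idx_orders_customer are hypothetical, chosen only for illustration.

```python
import sqlite3
from dataclasses import dataclass
from datetime import date

# Logical level: entity, attributes, and logical types; no storage details.
@dataclass
class Order:
    order_id: int
    customer_id: int
    order_date: date

# Physical level: platform-specific types plus an index chosen for a known query pattern.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL,
    order_date  TEXT    NOT NULL   -- SQLite has no native DATE type; ISO-8601 text is assumed
);
CREATE INDEX idx_orders_customer ON orders (customer_id);
""")

# Ask SQLite how it would run a lookup by customer; the last column holds the plan text.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT * FROM orders WHERE customer_id = ?", (42,)
).fetchall()
plan_text = " ".join(row[-1] for row in plan)
print(plan_text)
```

The dataclass says nothing about storage, whereas the DDL commits to SQLite's type system and to an index that only makes sense once the query pattern (lookups by customer) is known.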

Data Modeling Approaches

Relational Modeling

Traditional approach based on relational theory:

Foundation: Organize data into tables (relations) with rows (tuples) and columns (attributes), related through shared key values.

Normalization: Structure data to eliminate redundancy through normal forms, reducing update anomalies and ensuring consistency.

Relationships: Connect tables through primary keys and foreign keys, enabling complex queries through joins.

Use Cases: Transactional systems (OLTP), applications requiring strong consistency and integrity, scenarios where data relationships are complex.

Strengths: Proven reliability, strong consistency guarantees, mature tools and expertise, clear theoretical foundation.
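
A small sketch of why normalization reduces update anomalies, again using sqlite3 with hypothetical customers and orders tables: because the customer's city is stored once, a single UPDATE is reflected in every joined order row.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    city        TEXT NOT NULL
);
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
    amount      REAL NOT NULL
);
INSERT INTO customers VALUES (1, 'Ada', 'London');
INSERT INTO orders VALUES (10, 1, 25.0), (11, 1, 40.0);
""")

# The city is stored once, so one UPDATE keeps every order consistent.
conn.execute("UPDATE customers SET city = 'Paris' WHERE customer_id = 1")

rows = conn.execute("""
    SELECT o.order_id, c.name, c.city
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
""").fetchall()
print(rows)  # [(10, 'Ada', 'Paris'), (11, 'Ada', 'Paris')]
```

Had the city been copied onto each order row instead, the same change would require updating every order, and missing one would leave the data inconsistent.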

Dimensional Modeling

Analytical approach optimized for business intelligence:

Foundation: Organize data around business processes using fact tables for measurements and dimension tables for context.

Star Schema: Simple design with a central fact table directly connected to dimension tables, optimized for query performance.

Snowflake Schema: Normalized variation where dimensions connect to subdimensions, reducing redundancy at the cost of additional joins.

Use Cases: Data warehouses, business intelligence systems, analytical databases, reporting and dashboards.

Strengths: Intuitive for business users, optimized for analytical queries, efficient aggregations, supports drill-down analysis.

Document Modeling

Flexible approach for semi-structured data:

Foundation: Store data as self-contained documents (JSON, XML) that can have varying structure, enabling schema flexibility.

Structure: Documents contain both data and structure, allowing different documents in same collection to have different fields.

Relationships: Often denormalized with embedded documents rather than references, optimizing for document-centric access patterns.

Use Cases: Content management, user profiles, product catalogs, applications with evolving data requirements.

Strengths: Schema flexibility, natural representation of hierarchical data, aligns with object-oriented programming.
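
To illustrate schema flexibility and embedding, the sketch below represents two documents from a hypothetical "products" collection as plain Python dictionaries. No particular document database is assumed; the field names are invented for the example.

```python
import json

# Two documents in the same hypothetical collection, each with different fields.
products = [
    {
        "_id": "p1",
        "name": "Laptop",
        "specs": {"ram_gb": 16, "cpu": "8-core"},    # embedded document
        "reviews": [{"user": "ada", "rating": 5}],   # embedded array instead of a join
    },
    {
        "_id": "p2",
        "name": "T-shirt",
        "sizes": ["S", "M", "L"],                    # field the laptop does not have
    },
]

def average_rating(doc):
    """Return the mean review rating, or None when the document has no reviews."""
    reviews = doc.get("reviews", [])
    if not reviews:
        return None
    return sum(r["rating"] for r in reviews) / len(reviews)

for doc in products:
    print(doc["name"], average_rating(doc))

# Documents serialize to JSON and back without a separate schema definition.
restored = json.loads(json.dumps(products))
```

Because fields may be absent, reading code has to handle missing keys itself (here via doc.get), which is the usual price of schema flexibility.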

Graph Modeling

Relationship-focused approach:

Foundation: Represent data as nodes (entities) and edges (relationships), optimizing for relationship traversal and network analysis.

Structure: Nodes contain properties, edges define typed relationships with properties, enabling complex relationship queries.

Query Patterns: Designed for relationship queries like "find connections" or "shortest path", which in relational models typically require recursive queries or long chains of self-joins.

Use Cases: Social networks, recommendation engines, fraud detection, knowledge graphs, network analysis.

Strengths: Natural representation of connected data, efficient relationship queries, flexible schema evolution.
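
The node-and-edge structure and a "shortest path" query can be sketched in plain Python. This is an in-memory illustration using breadth-first search, not the API of any graph database; the people, the FOLLOWS edge type, and the since property are made up for the example.

```python
from collections import deque

# Nodes with properties.
nodes = {
    "alice": {"label": "Person"},
    "bob": {"label": "Person"},
    "carol": {"label": "Person"},
    "dave": {"label": "Person"},
}

# Typed, directed edges with properties: (source, type, target, properties).
edges = [
    ("alice", "FOLLOWS", "bob", {"since": 2021}),
    ("bob", "FOLLOWS", "carol", {"since": 2022}),
    ("alice", "FOLLOWS", "dave", {"since": 2020}),
    ("dave", "FOLLOWS", "carol", {"since": 2023}),
    ("carol", "FOLLOWS", "alice", {"since": 2023}),
]

def shortest_path(start, goal, edge_type="FOLLOWS"):
    """Breadth-first search over edges of one type; returns a list of node ids or None."""
    adjacency = {}
    for src, kind, dst, _props in edges:
        if kind == edge_type:
            adjacency.setdefault(src, []).append(dst)
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbor in adjacency.get(path[-1], []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None

print(shortest_path("alice", "carol"))  # ['alice', 'bob', 'carol']
```

The traversal follows edges directly from node to node, which is the access pattern graph models are built around.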

Data Modeling for Analytics

Star Schema Design

Most common analytical model structure:

Fact Tables: Contain measurements and metrics from business processes, with foreign keys to dimension tables. The grain defines the level of detail of each fact row, such as individual transactions or daily summaries.

Dimension Tables: Contain descriptive attributes providing context for facts. Typically denormalized for query simplicity.

Benefits: Simple to understand, excellent query performance, supports dimensional analysis tools, straightforward ETL processes.

Design Considerations: Choose appropriate fact grain, identify all relevant dimensions, handle slowly changing dimensions, optimize for common query patterns.
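
A minimal star schema can be sketched with sqlite3 as follows. The tables fact_sales, dim_date, and dim_product, their columns, and the sample rows are all illustrative assumptions; the fact grain chosen here is one row per product per day.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_date (
    date_key INTEGER PRIMARY KEY,   -- e.g. 20240115
    year     INTEGER NOT NULL,
    month    INTEGER NOT NULL
);
CREATE TABLE dim_product (
    product_key INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    category    TEXT NOT NULL       -- denormalized: category lives in the dimension row
);
-- Grain: one row per product per day.
CREATE TABLE fact_sales (
    date_key    INTEGER NOT NULL REFERENCES dim_date(date_key),
    product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
    quantity    INTEGER NOT NULL,
    revenue     REAL    NOT NULL
);
INSERT INTO dim_date VALUES (20240115, 2024, 1), (20240210, 2024, 2);
INSERT INTO dim_product VALUES (1, 'Laptop', 'Electronics'), (2, 'Desk', 'Furniture');
INSERT INTO fact_sales VALUES
    (20240115, 1, 2, 2000.0),
    (20240115, 2, 1, 300.0),
    (20240210, 1, 1, 1000.0);
""")

# Each dimension is one join away from the fact table.
revenue_by_category = conn.execute("""
    SELECT p.category, d.month, SUM(f.revenue) AS revenue
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    JOIN dim_date    AS d ON d.date_key    = f.date_key
    GROUP BY p.category, d.month
    ORDER BY p.category, d.month
""").fetchall()
print(revenue_by_category)
# [('Electronics', 1, 2000.0), ('Electronics', 2, 1000.0), ('Furniture', 1, 300.0)]
```

Grouping by any dimension attribute (category, month, year) uses the same query shape, which is a large part of why the structure suits dimensional analysis tools.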

Snowflake Schema Design

Normalized variation of star schema:

Structure: Dimension tables normalized into subdimensions, reducing data redundancy through multiple dimension table levels.

Benefits: Reduced storage requirements, clearer dimensional hierarchies, easier to maintain dimension consistency.

Trade-offs: Increased query complexity through additional joins, potentially slower query performance, more complex ETL processing.

When to Use: Storage costs are a significant concern, dimensions have clear hierarchies with significant redundancy, or dimensional integrity is critical.
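
The trade-off can be seen in a small sketch where the product dimension is normalized into a separate category subdimension. Table names and data are hypothetical; the point is the extra join needed to reach the category.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_category (
    category_key INTEGER PRIMARY KEY,
    category     TEXT NOT NULL           -- each category name is stored once
);
CREATE TABLE dim_product (
    product_key  INTEGER PRIMARY KEY,
    name         TEXT NOT NULL,
    category_key INTEGER NOT NULL REFERENCES dim_category(category_key)
);
CREATE TABLE fact_sales (
    product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
    revenue     REAL NOT NULL
);
INSERT INTO dim_category VALUES (1, 'Electronics'), (2, 'Furniture');
INSERT INTO dim_product VALUES (1, 'Laptop', 1), (2, 'Phone', 1), (3, 'Desk', 2);
INSERT INTO fact_sales VALUES (1, 1000.0), (2, 500.0), (3, 300.0);
""")

# One more join than the equivalent star-schema query: fact -> product -> category.
snowflake_totals = conn.execute("""
    SELECT c.category, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_product  AS p ON p.product_key  = f.product_key
    JOIN dim_category AS c ON c.category_key = p.category_key
    GROUP BY c.category
    ORDER BY c.category
""").fetchall()
print(snowflake_totals)  # [('Electronics', 1500.0), ('Furniture', 300.0)]
```

Renaming a category now touches a single row in dim_category, at the cost of a longer join path for every query that filters or groups by category.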

Data Vault Modeling

Approach designed for enterprise data warehousing:

Components: Hubs for business keys, links for relationships, satellites for descriptive attributes and history.

Benefits: Highly auditable with complete history, flexible for changing requirements, parallel loading capabilities.

Complexity: More complex than dimensional modeling, requires specialized expertise, additional layers between source and analytics.

When to Use: Extensive regulatory requirements, highly volatile source systems, need for complete auditability and history.
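
The hub, link, and satellite roles can be sketched as below. This is a simplified illustration under stated assumptions: the load_date and record_source columns follow a common Data Vault convention rather than anything specified in this guide, the hash keys are hard-coded strings instead of computed hashes, and all table names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hub: one row per business key.
CREATE TABLE hub_customer (
    customer_hk   TEXT PRIMARY KEY,      -- surrogate hash key (hashing omitted here)
    customer_id   TEXT NOT NULL UNIQUE,  -- business key from the source system
    load_date     TEXT NOT NULL,
    record_source TEXT NOT NULL
);
CREATE TABLE hub_order (
    order_hk      TEXT PRIMARY KEY,
    order_id      TEXT NOT NULL UNIQUE,
    load_date     TEXT NOT NULL,
    record_source TEXT NOT NULL
);
-- Link: relationship between hubs.
CREATE TABLE link_customer_order (
    link_hk       TEXT PRIMARY KEY,
    customer_hk   TEXT NOT NULL REFERENCES hub_customer(customer_hk),
    order_hk      TEXT NOT NULL REFERENCES hub_order(order_hk),
    load_date     TEXT NOT NULL,
    record_source TEXT NOT NULL
);
-- Satellite: descriptive attributes, one row per change (insert-only history).
CREATE TABLE sat_customer_details (
    customer_hk   TEXT NOT NULL REFERENCES hub_customer(customer_hk),
    load_date     TEXT NOT NULL,
    name          TEXT,
    city          TEXT,
    record_source TEXT NOT NULL,
    PRIMARY KEY (customer_hk, load_date)
);
INSERT INTO hub_customer VALUES ('hk_c1', 'C-001', '2024-01-01', 'crm');
INSERT INTO sat_customer_details VALUES
    ('hk_c1', '2024-01-01', 'Ada', 'London', 'crm'),
    ('hk_c1', '2024-03-01', 'Ada', 'Paris',  'crm');
""")

# History is kept by adding satellite rows, never by overwriting them.
history = conn.execute("""
    SELECT load_date, city FROM sat_customer_details
    WHERE customer_hk = 'hk_c1' ORDER BY load_date
""").fetchall()
current_city = history[-1][1]
print(history, current_city)
```

Because changes are appended rather than overwritten, the full history of the customer's attributes remains queryable, which is the source of the auditability benefit described above.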

Data Modeling Best Practices

Understand Business Requirements

Model data to support business processes and analytical needs:

Engage stakeholders to understand the entities, relationships, and rules that govern business operations. Ensure the model reflects how the business conceptualizes data rather than imposing technical structures.

Choose Appropriate Abstraction Level

Match modeling detail to purpose:

Conceptual models for business communication require less detail than physical models for implementation. Invest effort appropriate to each level's objectives.

Maintain Consistency

Use standard naming conventions and patterns:

Establish conventions for entity names, attribute names, and relationship naming. Consistency improves comprehension and maintainability across development teams.

Document Thoroughly

Maintain clear documentation of model decisions:

Document entity definitions, attribute meanings, relationship semantics, business rules, and design rationale. This documentation becomes essential for future maintenance and enhancement.

Consider Performance

Balance normalization with performance requirements:

While normalization reduces redundancy, analytical systems often benefit from strategic denormalization. Understand query patterns and optimize accordingly.

Plan for Change

Design models that accommodate evolution:

Business requirements change over time. Design with flexibility for new attributes, entities, and relationships without requiring fundamental restructuring.

Validate with Stakeholders

Confirm models match business understanding:

Review models with business stakeholders to ensure accurate representation of business concepts and rules. When the model and the business disagree about what an entity or rule means, the mismatch resurfaces in every report and application built on that model.

Modern Data Modeling Trends

Agile Data Modeling

Iterative approach aligned with agile development:

Rather than comprehensive upfront modeling, develop models iteratively through frequent releases. Each iteration adds detail and functionality based on immediate requirements.

Metadata-Driven Approaches

Use metadata to define and manage models:

Store model definitions as metadata that drives code generation, validation, and documentation. This approach enables rapid iteration and maintains consistency.
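
One way to picture this is a model definition held as plain data, from which both the DDL and the documentation are generated. The metadata format below (a dictionary of tables with name, type, primary_key, and required fields) is a hypothetical convention invented for this sketch, not a standard.

```python
import sqlite3

# Hypothetical metadata format: table name -> list of column definitions.
MODEL = {
    "customers": [
        {"name": "customer_id", "type": "INTEGER", "primary_key": True},
        {"name": "name", "type": "TEXT", "required": True},
        {"name": "email", "type": "TEXT", "required": True},
    ],
}

def generate_ddl(table, columns):
    """Build a CREATE TABLE statement from column metadata."""
    parts = []
    for col in columns:
        piece = f'{col["name"]} {col["type"]}'
        if col.get("primary_key"):
            piece += " PRIMARY KEY"
        elif col.get("required"):
            piece += " NOT NULL"
        parts.append(piece)
    return f'CREATE TABLE {table} ({", ".join(parts)})'

def generate_docs(table, columns):
    """Build one documentation line per column from the same metadata."""
    return [f'{table}.{col["name"]}: {col["type"]}' for col in columns]

conn = sqlite3.connect(":memory:")
for table, columns in MODEL.items():
    ddl = generate_ddl(table, columns)
    print(ddl)
    conn.execute(ddl)
    for line in generate_docs(table, columns):
        print(line)
```

Since the schema and its documentation come from one definition, adding a column means editing the metadata once and regenerating both, which is where the consistency benefit comes from.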

Hybrid Modeling

Combine multiple modeling approaches:

Use relational models for transactional consistency, dimensional models for analytics, and document models for flexible content. Integration layers connect these models.

Cloud-Native Modeling

Design for cloud platform capabilities:

Leverage cloud features like automatic scaling, managed services, and serverless computing. Models may favor horizontal partitioning and eventual consistency over the single-node, strongly consistent designs common in traditional systems.

The Future of Data Modeling

AI-Assisted Modeling

Machine learning will support model development:

AI systems will analyze data sources and recommend model structures, identify relationships automatically, suggest optimizations based on query patterns, and detect modeling anti-patterns.

Semantic Modeling

Business-friendly abstraction layers:

Semantic models will provide business terminology layers above physical implementations, enabling business users to work with familiar concepts while systems map to underlying complex structures.

Real-Time Model Evolution

Dynamic schema management:

Systems will handle schema evolution automatically, adapting to changing data structures without manual intervention or downtime.

Unified Modeling

Single models supporting diverse workloads:

Converged architectures will support transactional, analytical, and operational workloads through unified models, eliminating the need for separate transactional and analytical modeling.

Data modeling remains essential despite evolving technology and approaches. Whether explicit through formal methodology or implicit in application code, every system has a data model that determines how effectively it supports business requirements. Investing in thoughtful data modeling pays dividends in system quality, maintainability, and ability to evolve with changing business needs.

Platforms like FireAI abstract data model complexity by providing natural language interfaces that automatically map business questions to underlying data structures, enabling users to work with data without understanding technical model details.

Frequently Asked Questions

What is a data model?

A data model is an abstract representation defining how data elements relate to each other and to real-world entities, specifying structure, relationships, constraints, and semantics. It provides blueprints for database design, ensuring data is organized logically and consistently to support business and analytical requirements.

What are the three levels of data modeling?

The three levels are conceptual (high-level business-focused representation), logical (detailed technology-independent specification with all entities and relationships), and physical (implementation-specific design for particular database platforms with optimization). Each serves different purposes and audiences.

What is the difference between relational and dimensional modeling?

Relational modeling organizes data into normalized tables for transactional systems, eliminating redundancy through normal forms. Dimensional modeling structures data around business processes with fact and dimension tables optimized for analytical queries, often denormalized for performance. Each suits different use cases.

What is a star schema?

A star schema is a dimensional modeling approach with central fact tables containing measurements connected directly to dimension tables containing descriptive attributes. This simple structure optimizes analytical query performance and is intuitive for business users, making it the most common data warehouse design.

What are entities, attributes, and relationships?

Entities are objects about which data is collected (customers, products). Attributes are properties of entities (customer name, product price). Relationships are associations between entities (customers place orders). These components form the foundation of data models at all abstraction levels.

What is normalization?

Normalization is the process of organizing data to reduce redundancy and dependency by dividing tables and defining relationships between them. Normal forms (1NF, 2NF, 3NF) progressively eliminate different types of redundancy, ensuring data consistency and integrity in relational models.

What is the difference between a star schema and a snowflake schema?

A star schema has denormalized dimension tables directly connected to fact tables, giving a simple structure and fast queries. A snowflake schema normalizes dimensions into subdimension tables, reducing redundancy but adding complexity and joins. Star schemas favor query performance; snowflake schemas favor storage efficiency and maintenance.

What are the best practices for data modeling?

Best practices include understanding business requirements thoroughly, choosing appropriate abstraction levels, maintaining consistency through naming conventions, documenting decisions comprehensively, balancing normalization with performance needs, planning for change and evolution, and validating models with stakeholders.

What is the difference between logical and physical data models?

Logical models define detailed data structure independently of technology, specifying entities, attributes, and relationships without implementation details. Physical models specify exactly how logical models will be implemented in specific database platforms, including tables, indexes, and performance optimizations.

What is the future of data modeling?

The future includes AI-assisted modeling with automatic structure recommendations, semantic modeling providing business-friendly abstraction layers, real-time model evolution handling schema changes automatically, and unified modeling supporting transactional and analytical workloads through converged architectures.

Related Questions In This Topic

What is a Data Warehouse? Definition, Architecture, and Benefits

A data warehouse is a centralized repository that stores structured data from multiple sources optimized for analytical queries and business intelligence. Learn how data warehouses work, which architecture to use, and how they enable efficient reporting and data-driven decision-making.

What is Data Blending? Definition, Benefits, and Examples

Data blending combines data from multiple sources without traditional database joins, enabling analysis across disparate systems. Learn how data blending works, which benefits it provides, and see examples of flexible cross-source analytics.

What is Metadata in Analytics? Types, Benefits, and Best Practices

Metadata in analytics is descriptive information about datasets that provides context, structure, and meaning. Learn how metadata works, which types exist (technical, business, operational), and discover best practices for metadata management.

What is Data Democratization? Benefits, Challenges, and Implementation Guide

Data democratization enables all employees to access and analyze business data without technical barriers. Learn how data democratization works, which benefits it provides, and how to implement it to transform organizations into data-driven cultures.

Related Guides From Our Blog

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

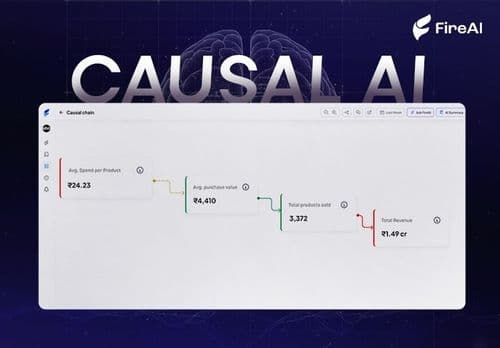

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.

Why Your Data Presentations Don’t Work And How To Fix Them With an Insight-First Framework

Most companies drown leaders in data but starve them of decisions — because they present metrics instead of insights. This post reveals the consultant’s insight-first framework (and the 15 rules top firms use) to turn dashboards into decisive action.