What is Data Blending? Definition, Benefits, and Examples

Quick Answer

Data blending is the technique of combining data from multiple sources for analysis without physically joining them in a database or data warehouse. It brings together information from disparate systems at query time, enabling analysts to create unified views across databases, spreadsheets, cloud applications, and other sources without requiring formal data integration infrastructure.

Data blending is the technique of combining data from multiple sources for analysis without physically joining them in a database or data warehouse. It brings together information from disparate systems at query time, enabling analysts to create unified views across databases, spreadsheets, cloud applications, and other sources without requiring formal data integration infrastructure.

Data blending emerged to address the reality that business data exists across numerous systems, formats, and platforms that may never consolidate into unified databases. By enabling analysis across these disparate sources without formal integration, data blending provides analytical flexibility that complements traditional data warehousing approaches. Data blending enables self-service BI by allowing business users to combine data from multiple sources without ETL processes or database expertise.

What is Data Blending?

Data blending is the analytical technique of combining data from multiple sources dynamically during analysis, rather than through predefined database joins or ETL processes. It allows analysts to bring together information from different systems based on common dimensions or keys, creating unified analytical views without requiring database-level integration.

Unlike traditional data integration that physically moves and joins data before analysis, data blending performs integration at query time. Analysts define relationships between datasets within analytical tools, and the blending engine combines data on-the-fly to support specific analyses. This approach provides agility for exploratory analysis and situations where formal integration is impractical or unnecessary.

Core Characteristics

Query-Time Integration: Data combination happens during analysis rather than through pre-built ETL pipelines, enabling flexible exploration.

Relationship-Based: Analysts define how datasets relate through common fields, typically dimensions like date, customer ID, or product code.

Source Agnostic: Blending works across diverse source types including databases, files, cloud applications, and APIs without requiring uniform platforms.

Analyst-Driven: Business analysts define blending logic through visual interfaces without requiring database or ETL development.

Temporary Combination: Blended data exists only for specific analysis sessions rather than as persistent integrated datasets.

How Data Blending Works

Defining Relationships

Data blending begins by establishing how datasets relate:

Primary Source: Analysts designate one dataset as the primary source, providing the base dimensional structure for the analysis.

Secondary Sources: Additional datasets join to the primary source based on common fields, enriching the analysis with supplementary data.

Join Keys: Analysts specify which fields establish relationships between sources, such as matching customer IDs or date values.

Join Types: Define how records combine, including left joins that keep all primary source records or inner joins that include only matched records.

Data Retrieval

Blending engines retrieve data from each source:

Parallel Queries: Blending tools simultaneously query multiple sources to minimize total retrieval time.

Partial Extraction: Only fields and records needed for specific analysis are retrieved rather than full datasets.

Caching: Retrieved data is temporarily cached to support iterative analysis without repeated source queries.

Incremental Refresh: Smart caching strategies refresh only changed data when analyses are repeated.

Data Combination

Blending engines merge retrieved data:

Aggregation Matching: When sources exist at different granularity levels, blending engines automatically aggregate or disaggregate to match.

Null Handling: Manage missing data when secondary sources lack records corresponding to primary source entries.

Calculation Reconciliation: Ensure calculations respect appropriate granularity given combined data from multiple sources.

Performance Optimization: Blending engines optimize combination logic to maintain acceptable query response times.

Data Blending vs. Traditional Integration

Data Blending

Implementation: Analyst-driven through visual interfaces in BI tools without IT involvement.

Timing: Integration happens at query time during specific analyses.

Scope: Combines data for specific analytical questions rather than comprehensive integration.

Flexibility: Easy to change relationships and add new sources as analytical needs evolve.

Governance: Less formal control over how data combines, with integration logic defined per analysis.

Performance: May exhibit slower query performance compared to pre-integrated data, depending on data volume.

Traditional Integration (ETL/Data Warehouse)

Implementation: IT-driven through formal ETL development and database design.

Timing: Integration happens before analysis through scheduled batch processes.

Scope: Comprehensive integration supporting wide range of analytical requirements.

Flexibility: Changes to integration logic require development cycles and testing.

Governance: Centralized control ensures consistent integration logic and data quality rules.

Performance: Optimized query performance through pre-computed joins and aggregations.

When to Use Data Blending

Exploratory Analysis

When analytical requirements are uncertain or evolving:

Analysts exploring new data sources or investigating ad-hoc questions benefit from blending's flexibility. Rather than waiting for formal integration development, they can quickly combine relevant datasets to test hypotheses and discover insights.

Infrequently Used Combinations

When specific data combinations serve narrow analytical purposes:

If an analysis requires combining sources only occasionally, formal integration may be overkill. Blending provides the capability when needed without the overhead of maintaining integration infrastructure for infrequent use.

External Data Sources

When incorporating data from sources outside organizational control:

Third-party data feeds, market research reports, or partner-provided datasets often exist outside enterprise data architectures. Blending enables their incorporation without adding them to warehouses.

Proof of Concept

When evaluating potential data sources before committing to integration:

Blending allows quick assessment of whether external data sources provide analytical value, informing decisions about formal integration investments.

Complementing Data Warehouses

When extending warehouse data with supplemental information:

Organizations with established data warehouses use blending to augment warehouse data with additional sources without expanding warehouse scope, combining the benefits of both approaches.

Data Blending Implementation

Blending in Tableau

Tableau pioneered mainstream data blending:

Analysts connect to multiple data sources, designate one as primary, and Tableau automatically identifies potential linking fields based on name and data type matching. Users confirm relationships and Tableau blends data at visualization generation time.

Strengths: Intuitive interface, automatic relationship detection, works with virtually any data source.

Limitations: Performance degrades with very large secondary sources, and complex many-to-many relationships can be challenging.

Blending in Power BI

Power BI uses relationship-based modeling:

The Power Query interface enables data import from multiple sources. Analysts define relationships in the data model, and DAX formulas reference across tables. While technically relationship-based modeling rather than pure blending, it provides similar analyst-driven integration capabilities.

Strengths: Robust handling of complex relationships, strong performance through in-memory processing.

Limitations: Requires importing data rather than live query blending in many scenarios.

Blending in Looker

Looker approaches blending through its modeling layer:

LookML defines relationships between datasets, enabling cross-source analysis. While requiring some technical modeling, it provides powerful blending capabilities with governed relationship definitions.

Strengths: Governed blending logic reusable across analyses, strong performance optimization.

Limitations: Requires LookML development rather than pure self-service blending.

Custom Blending Solutions

Organizations sometimes build custom blending capabilities:

Using programming languages like Python or R, data engineers create scripts that combine sources programmatically. Jupyter notebooks provide environments where analysts interact with blended data through code.

Strengths: Maximum flexibility, no tool limitations on data sources or combination logic.

Limitations: Requires programming skills, lacks visual interface simplicity of BI tool blending.

Best Practices for Data Blending

Choose Appropriate Primary Sources

Select primary sources carefully:

The primary dataset determines the grain and structure of blended results. Choose sources that represent the fundamental dimension of analysis, such as customer dimension for customer analysis or product dimension for product analysis.

Validate Relationships

Confirm data relationships before trusting blended results:

Check that linking fields contain matching values and appropriate cardinality. Mismatched relationships produce incorrect results that may not be immediately obvious.

Manage Granularity Differences

Be explicit about aggregation when sources exist at different detail levels:

When blending daily transaction data with monthly budget data, understand that budget values will repeat for each day within months. Design analyses accounting for this granularity difference.

Document Blending Logic

Maintain clear documentation of how sources combine:

Since blending logic lives in analyses rather than centralized repositories, document relationships, assumptions, and business rules to support collaboration and maintenance.

Consider Performance Impact

Monitor query response times and optimize when necessary:

Large secondary sources can significantly slow blending queries. Consider filtering secondary sources, pre-aggregating when appropriate, or moving frequently used blends to formal integration.

Implement Appropriate Security

Ensure blending respects data access policies:

When blending combines sources with different security requirements, implement controls that prevent inappropriate data exposure through blended views.

Challenges and Limitations

Performance at Scale

Blending query-time integration can be slow with large datasets:

Mitigation: Filter data before blending, use extracts rather than live connections, pre-aggregate when appropriate, and consider moving frequent blends to formal integration.

Limited Complex Join Support

Some blending tools struggle with sophisticated join scenarios:

Mitigation: Simplify data relationships before blending, perform complex joins in databases before blending, or use programming-based blending for complex scenarios.

Governance Challenges

Analyst-driven blending can create inconsistent analytical views:

Mitigation: Establish standards for common blending scenarios, implement review processes for critical analyses, and document approved blending approaches.

Data Quality Concerns

Blending may not include the data quality validation present in formal integration:

Mitigation: Implement data quality checks within blending workflows, validate source data quality before blending, and monitor blended result reasonableness.

Calculation Complexity

Calculations across blended data require careful attention to aggregation:

Mitigation: Test calculations thoroughly, use blending tool features that handle aggregation automatically when available, and document calculation logic clearly.

The Future of Data Blending

AI-Enhanced Blending

Machine learning will improve blending automation:

AI systems will automatically identify potential relationships between sources, detect data quality issues that affect blending, and recommend optimal primary source selection based on analytical objectives.

Semantic Layer Integration

Unified business definitions will improve blending consistency:

Semantic layers that provide common business term definitions across sources will enable more consistent blending by ensuring analysts interpret fields identically regardless of source.

Performance Optimization

Advanced query optimization will reduce blending performance penalties:

Query engines will more intelligently optimize cross-source queries, push computations to source systems when appropriate, and leverage caching strategies that minimize repeated source access.

Governed Self-Service

Balance analytical flexibility with appropriate control:

Future blending platforms will combine self-service flexibility with governance frameworks that ensure compliance and consistency without eliminating analytical agility.

Real-Time Blending

Extend blending to streaming data sources:

As real-time analytics become more common, blending capabilities will extend to streaming sources, enabling cross-source analysis that includes both historical and real-time data.

Data blending provides essential analytical flexibility in environments where data necessarily remains distributed across multiple systems. While not replacing formal data integration for high-volume, repeated analyses, blending enables exploratory analysis and special-purpose data combinations that would be impractical through traditional approaches.

Platforms like FireAI support blended analysis by enabling natural language queries across multiple data sources, automatically determining appropriate data relationships and generating queries that combine information without requiring users to understand blending mechanics or define relationships manually.

Explore FireAI Workflows

Jump from the concept on this page into the product features and solution paths most relevant to it.

BI Fundamentals

Foundational guides on business intelligence, analytics architecture, self-service BI, and core data concepts.

Ready to Transform Your Business Data?

Experience the power of AI-powered business intelligence. Ask questions, get insights, make better decisions.

Frequently Asked Questions

Data blending is the technique of combining data from multiple sources for analysis without physically joining them in a database. It brings together information from disparate systems at query time, enabling analysts to create unified views across databases, spreadsheets, and cloud applications without formal integration infrastructure.

Data blending combines sources at query time during specific analyses through analyst-defined relationships in BI tools. Data integration physically combines sources beforehand through ETL processes into data warehouses. Blending offers flexibility for exploratory analysis, while integration provides optimized performance for repeated queries.

Data blending works by analysts defining relationships between datasets through common fields like customer ID or date. Blending engines retrieve data from multiple sources, combine them based on specified relationships, handle granularity differences, and present unified views for analysis without creating permanent integrated datasets.

Use data blending for exploratory analysis with uncertain requirements, infrequently used data combinations, incorporating external data sources, proof-of-concept evaluation of new sources, and complementing data warehouses with supplemental information without expanding warehouse scope.

Tools supporting data blending include Tableau with automatic relationship detection, Power BI through relationship-based modeling, Looker with LookML-defined cross-source relationships, and custom solutions using Python or R for programmatic data combination in analytical environments.

Limitations include performance degradation with large datasets compared to pre-integrated data, challenges with complex join scenarios, governance concerns from decentralized integration logic, potential data quality issues without formal validation, and calculation complexity requiring careful aggregation handling.

Best practices include choosing appropriate primary sources that determine analysis grain, validating relationships before trusting results, managing granularity differences explicitly, documenting blending logic comprehensively, monitoring performance impact and optimizing when necessary, and implementing security controls respecting data access policies.

Data blending and data mashup are largely synonymous terms describing the combination of multiple sources for analysis. Blending is more commonly used in business intelligence contexts, while mashup has origins in web application development, but both refer to flexible, analyst-driven data combination approaches.

Modern data blending increasingly supports real-time data sources, enabling cross-source analysis combining historical warehouse data with streaming operational data. However, performance considerations and tool capabilities vary, with real-time blending generally more challenging than blending static sources.

The future includes AI-enhanced blending with automatic relationship identification, semantic layer integration for consistent definitions, performance optimization reducing query penalties, governed self-service balancing flexibility with control, and real-time blending extending capabilities to streaming sources.

Related Questions In This Topic

What is a Data Warehouse? Definition, Architecture, and Benefits

A data warehouse is a centralized repository that stores structured data from multiple sources optimized for analytical queries and business intelligence. Learn how data warehouses work, which architecture to use, and how they enable efficient reporting and data-driven decision-making.

What is a Data Lake? Definition, Benefits, and Comparison Guide

A data lake is a centralized repository that stores raw, unstructured, and semi-structured data at scale in native formats. Learn how data lakes work, which benefits they provide, and compare data lakes vs data warehouses for your analytics needs.

What is Data Lineage? Definition, Benefits, and Use Cases

Data lineage tracks data flow from sources through transformations to consumption, showing origins, processing steps, and dependencies. Learn how data lineage works, which benefits it provides, and how it supports governance, troubleshooting, and impact analysis.

What is Data Democratization? Benefits, Challenges, and Implementation Guide

Data democratization enables all employees to access and analyze business data without technical barriers. Learn how data democratization works, which benefits it provides, and how to implement it to transform organizations into data-driven cultures.

Related Guides From Our Blog

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

How a Modern Analytics Platform Transforms Business Intelligence

Why faster decision-making, real-time analytics, and AI-driven intelligence separate market leaders from laggards—and how Fire AI closes the gap between data and action.

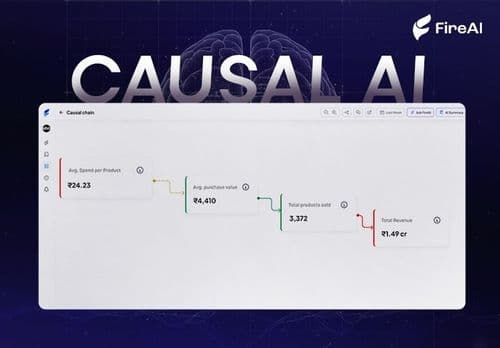

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.