What is a Data Lake? Definition, Benefits, and Comparison Guide

Quick Answer

A data lake is a centralized repository that stores vast amounts of raw data in its native format, including structured, semi-structured, and unstructured data. Unlike data warehouses that require predefined schemas, data lakes use a schema-on-read approach, storing data first and applying structure during analysis, enabling flexible exploration and diverse analytical workloads including machine learning and big data processing.

Data lakes emerged from the need to store and analyze diverse data types at scales beyond traditional data warehouse capabilities. By accepting data in any format without requiring upfront transformation, data lakes enable organizations to capture valuable information that might otherwise be discarded due to processing complexity or uncertain analytical value. They also serve business intelligence platforms that need flexible data access.

What is a Data Lake?

A data lake is a centralized storage repository designed to hold massive volumes of raw data in its native format until needed for analysis. Unlike traditional data warehouses that impose structure during data ingestion, data lakes preserve data in original forms, allowing analysts to apply schemas and transformations during query time based on specific analytical requirements.

This architectural approach provides significant flexibility for exploratory analysis, machine learning workloads, and use cases where the analytical requirements may not be fully known at the time of data collection. Data lakes support structured data from relational databases, semi-structured data like JSON and XML, unstructured data including text documents and images, and streaming data from IoT devices and application logs.

Core Principles

Schema-on-Read: Data is stored in raw format and structure is applied during analysis, allowing multiple analytical approaches on the same dataset.

Scalability: Designed to handle petabyte-scale data volumes using distributed storage systems that scale horizontally across commodity hardware.

Format Flexibility: Accepts any data type without requiring transformation or standardization before storage, preserving original data fidelity.

Cost Efficiency: Leverages inexpensive object storage rather than specialized database systems, dramatically reducing storage costs per terabyte.

Decoupled Storage and Compute: Separates data storage from processing engines, allowing different analytical tools to work with the same data.
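The schema-on-read principle above can be illustrated with a minimal sketch. The sample events, field names, and the read_with_schema helper are hypothetical and exist only for illustration; real engines apply schemas in a far more sophisticated way.

```python
import json

# Raw events stored exactly as received (hypothetical sample data).
RAW_EVENTS = [
    '{"user": "a1", "amount": "19.99", "ts": "2024-01-05T10:00:00", "country": "DE"}',
    '{"user": "b2", "amount": "5.00", "ts": "2024-01-05T11:30:00"}',
]


def read_with_schema(raw_lines, schema):
    """Apply a schema at read time: select fields and cast them, using None when absent."""
    for line in raw_lines:
        record = json.loads(line)
        yield {
            field: (cast(record[field]) if field in record else None)
            for field, cast in schema.items()
        }


# Two consumers project different structures from the same raw data.
revenue_schema = {"user": str, "amount": float}
geo_schema = {"user": str, "country": str}

revenue_rows = list(read_with_schema(RAW_EVENTS, revenue_schema))
geo_rows = list(read_with_schema(RAW_EVENTS, geo_schema))
```

Neither consumer required the raw events to be reshaped before storage, which is the point of deferring structure to query time.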

Data Lake Architecture

Storage Layer

The foundation stores raw data using distributed file systems or cloud object storage:

Distributed File Systems: Hadoop HDFS provides fault-tolerant storage across clusters of commodity servers, replicating data for reliability.

Cloud Object Storage: Services like Amazon S3, Azure Data Lake Storage, and Google Cloud Storage offer virtually unlimited scalability with built-in redundancy and durability.

Data Organization: Files are typically organized in hierarchical folder structures representing business domains, data sources, or time periods, with metadata systems tracking content and lineage.

Processing Layer

Multiple processing engines operate on data lake storage:

Batch Processing: Frameworks like Apache Spark and Hadoop MapReduce process large datasets through distributed computation.

Stream Processing: Technologies like Apache Kafka (event ingestion and transport) and Apache Flink (stateful stream computation) handle real-time data ingestion and analysis.

SQL Engines: Query engines like Presto, Athena, and Databricks SQL enable SQL-based analysis directly on lake data.

Machine Learning: Frameworks like TensorFlow and PyTorch read lake data for training and inference workloads.

Metadata and Catalog Layer

Metadata management systems provide data discoverability and governance:

Data Catalogs: Tools like the AWS Glue Data Catalog, Microsoft Purview (formerly Azure Purview), and Apache Atlas catalog datasets with searchable metadata, making data discoverable across the organization.

Schema Registry: Centralized schema definitions enable consistent interpretation of semi-structured data formats.

Data Lineage: Track data origins, transformations, and dependencies to support governance and troubleshooting.
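As a rough illustration of how lineage supports troubleshooting and impact analysis, the sketch below walks a small dependency graph. The dataset names and the LINEAGE mapping are hypothetical; catalog tools store and query lineage in their own ways.

```python
# Hypothetical lineage edges: dataset -> datasets it was derived from.
LINEAGE = {
    "curated/sales_daily": ["refined/orders", "refined/customers"],
    "refined/orders": ["raw/orders_export"],
    "refined/customers": ["raw/crm_dump"],
}


def upstream(dataset, lineage):
    """Return every dataset the given dataset depends on, directly or indirectly."""
    seen = set()
    stack = [dataset]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def downstream(dataset, lineage):
    """Return every dataset that would be affected if the given dataset changed."""
    return {name for name in lineage if dataset in upstream(name, lineage)}
```

Here upstream answers "where did this data come from?" and downstream answers "what breaks if this source changes?".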

Access and Security Layer

Controls and interfaces that govern data access:

Authentication and Authorization: Identity management systems control who can access specific datasets based on roles and permissions.

Data Encryption: Encryption at rest and in transit protects sensitive information.

Audit Logging: Comprehensive logging tracks data access and modifications for compliance and security monitoring.

Data Lake Implementation Approaches

Zone-Based Organization

Many data lakes organize data into zones representing different processing stages:

Raw Zone: Unprocessed data in original formats as received from source systems, providing an immutable historical record.

Refined Zone: Cleansed and validated data with basic quality checks applied, but still in flexible formats suitable for diverse analytical approaches.

Curated Zone: Data transformed into optimized formats for specific use cases, potentially including aggregations and denormalization for performance.

Sandbox Zone: Experimental area where analysts can create temporary datasets without impacting production data.
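One way to keep zones consistent is to generate object keys from a single helper rather than typing paths by hand. The sketch below assumes a zone/domain/dataset/date layout; the zone names mirror the list above, while the lake_path and promote helpers and the exact layout are illustrative assumptions.

```python
from datetime import date
from pathlib import PurePosixPath

ZONES = ("raw", "refined", "curated", "sandbox")


def lake_path(zone, domain, dataset, day, filename):
    """Build an object key such as raw/sales/orders/year=2024/month=01/day=05/part-0.json."""
    if zone not in ZONES:
        raise ValueError(f"unknown zone: {zone}")
    return str(
        PurePosixPath(
            zone, domain, dataset,
            f"year={day.year:04d}", f"month={day.month:02d}", f"day={day.day:02d}",
            filename,
        )
    )


def promote(path, target_zone):
    """Return the key the same file would use in another zone."""
    if target_zone not in ZONES:
        raise ValueError(f"unknown zone: {target_zone}")
    parts = PurePosixPath(path).parts
    return str(PurePosixPath(target_zone, *parts[1:]))


raw_key = lake_path("raw", "sales", "orders", date(2024, 1, 5), "part-0.json")
refined_key = promote(raw_key, "refined")
```

Because only the leading segment changes between zones, a dataset can be traced from its raw form to its curated form by path alone.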

File Formats for Data Lakes

Parquet: Columnar storage format optimized for analytical queries, offering efficient compression and encoding schemes that reduce storage costs and improve query performance.

ORC: Optimized Row Columnar format providing high compression ratios and fast read performance, particularly popular in Hadoop ecosystems.

Avro: Row-based format with rich schema definitions ideal for streaming data and applications requiring schema evolution.

JSON: Human-readable format convenient for semi-structured data, though less storage-efficient than columnar formats.

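Delta Lake: Strictly a storage layer rather than a file format. It is an open-source layer that stores data as Parquet files alongside a transaction log, providing ACID transactions on data lakes and enabling reliability features previously available only in databases.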
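The difference between row-based and columnar layouts can be shown in plain Python. This is a conceptual sketch only, not how Parquet or ORC are implemented; the sample rows and helper names are hypothetical, and the pivot assumes every record has the same fields.

```python
rows = [
    {"order_id": 1, "region": "EU", "amount": 20.0},
    {"order_id": 2, "region": "US", "amount": 35.5},
    {"order_id": 3, "region": "EU", "amount": 12.5},
]


def to_columnar(records):
    """Pivot row-oriented records into one list of values per column."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


def run_length_encode(values):
    """Collapse consecutive repeats into [value, count] pairs."""
    encoded = []
    for value in values:
        if encoded and encoded[-1][0] == value:
            encoded[-1][1] += 1
        else:
            encoded.append([value, 1])
    return encoded


columns = to_columnar(rows)
# An aggregate over one column reads only that column's values.
total_amount = sum(columns["amount"])
# Values of the same type stored together compress well when they repeat.
encoded_regions = run_length_encode(sorted(columns["region"]))
```

Reading a single column and encoding similar values together is the intuition behind why columnar formats tend to suit analytical queries, while row-based formats such as Avro suit record-at-a-time streaming.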

Data Lake Benefits

Flexibility and Agility

Data lakes accommodate diverse data types without requiring upfront schema design, enabling organizations to capture data whose analytical value may not be immediately apparent. This flexibility supports exploratory analysis where questions evolve based on discoveries.

Cost Efficiency

Storage costs for data lakes are substantially lower than traditional data warehouse storage, particularly when using cloud object storage. This economic advantage enables organizations to retain data longer and capture more comprehensive datasets.

Advanced Analytics Support

The raw, detailed data in lakes provides ideal foundations for machine learning models that benefit from large training datasets. Data scientists can access granular data without aggregations or transformations that might obscure important patterns.

Unified Data Platform

Data lakes consolidate diverse data sources into a single repository, reducing data silos and enabling cross-functional analysis. This unification simplifies data management while providing comprehensive views of organizational information.

Future-Proofing

Storing data in raw formats protects against analytical obsolescence. As new analytical techniques emerge, organizations can apply them to existing data without re-collecting information from source systems.

Data Lake Challenges

Data Swamp Risk

Without proper governance and metadata management, data lakes can devolve into data swamps where datasets are difficult to discover, understand, or trust. Preventing this requires:

Comprehensive Metadata: Document data sources, update frequencies, schemas, and business definitions.

Data Quality Monitoring: Implement automated checks that validate data quality and flag anomalies.

Access Patterns: Track which datasets are actively used versus abandoned to guide maintenance efforts.
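An automated quality check of the kind described above might look like the following sketch. The check_quality function, the rule format, and the field names are assumptions made for illustration, not part of any particular tool.

```python
def check_quality(records, required_fields, numeric_ranges):
    """Return (row_index, problem) tuples for records that fail basic checks.

    required_fields: field names that must be present and non-empty.
    numeric_ranges: mapping of field name to an inclusive (low, high) range.
    """
    problems = []
    for index, record in enumerate(records):
        for field in required_fields:
            if record.get(field) in (None, ""):
                problems.append((index, f"missing {field}"))
        for field, (low, high) in numeric_ranges.items():
            value = record.get(field)
            # Only numeric values are range-checked; other types are skipped here.
            if isinstance(value, (int, float)) and not (low <= value <= high):
                problems.append((index, f"{field} out of range"))
    return problems
```

A pipeline could run such checks on each incoming batch and record the results next to the dataset's metadata, so consumers can see whether a dataset is trustworthy before using it.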

Performance Considerations

Query performance on raw data can be slower than optimized data warehouse structures. Mitigation strategies include:

Data Partitioning: Organize files by frequently filtered dimensions like date or region to enable partition pruning during queries.

File Size Optimization: Consolidate small files and split extremely large files to balance parallelism with overhead.

Format Selection: Use columnar formats like Parquet for analytical workloads requiring only subsets of columns.

Caching Strategies: Implement result caching and materialized views for frequently accessed queries.
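Partition pruning, mentioned in the list above, can be sketched with name=value path segments. The keys and the prune helper below are hypothetical; query engines perform this step internally using catalog metadata.

```python
def parse_partitions(key):
    """Extract name=value segments from an object key."""
    parts = {}
    for segment in key.split("/"):
        if "=" in segment:
            name, _, value = segment.partition("=")
            parts[name] = value
    return parts


def prune(keys, **filters):
    """Keep only keys whose partition values match every filter."""
    selected = []
    for key in keys:
        parts = parse_partitions(key)
        if all(parts.get(name) == value for name, value in filters.items()):
            selected.append(key)
    return selected


KEYS = [
    "curated/sales/date=2024-01-05/region=EU/part-0.parquet",
    "curated/sales/date=2024-01-05/region=US/part-0.parquet",
    "curated/sales/date=2024-01-06/region=EU/part-0.parquet",
]

eu_jan_6 = prune(KEYS, date="2024-01-06", region="EU")
```

A query filtered on date and region opens one file instead of three, because the other two are excluded from the path alone without being read.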

Security and Governance

The flexibility of data lakes creates governance challenges:

Fine-Grained Access Control: Implement column-level and row-level security to protect sensitive information while enabling broad data access.

Data Classification: Automatically identify and tag sensitive data to ensure appropriate protection.

Compliance Management: Track data lineage and retention to satisfy regulatory requirements.
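Column-level and row-level security can be pictured as a filter applied between storage and the reader. The roles, policies, and read_as helper below are hypothetical; production systems enforce such rules in the catalog or query engine rather than in application code.

```python
# Hypothetical policies: visible columns per role, plus an optional row filter.
POLICIES = {
    "analyst": {
        "columns": {"order_id", "region", "amount"},
        "row_filter": None,
    },
    "eu_support": {
        "columns": {"order_id", "region", "email"},
        "row_filter": lambda row: row["region"] == "EU",
    },
}

ROWS = [
    {"order_id": 1, "region": "EU", "amount": 20.0, "email": "a@example.com"},
    {"order_id": 2, "region": "US", "amount": 35.5, "email": "b@example.com"},
]


def read_as(role, rows):
    """Return only the rows and columns the role's policy allows."""
    policy = POLICIES.get(role)
    if policy is None:
        raise PermissionError(f"no policy for role: {role}")
    row_filter = policy["row_filter"]
    visible = []
    for row in rows:
        if row_filter is not None and not row_filter(row):
            continue  # row-level security: skip rows the role may not see
        # column-level security: drop columns outside the allowed set
        visible.append({k: v for k, v in row.items() if k in policy["columns"]})
    return visible
```

The same underlying data serves both roles: analysts see all orders without email addresses, while the support role sees contact details only for one region.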

Data Lake vs. Data Warehouse

When to Use a Data Lake

Data lakes excel when:

- Working with diverse data types including unstructured and semi-structured data

- Analytical requirements are exploratory or evolving

- Machine learning workloads require access to raw, granular data

- Storage costs are a primary concern given data volume

- Multiple analytical tools and frameworks need access to the same data

When to Use a Data Warehouse

Data warehouses are better suited when:

- Data is primarily structured and relational

- Analytical requirements are well-defined with known reporting needs

- Query performance is critical for user-facing dashboards

- Data consistency and quality are paramount

- Users require SQL-based business intelligence tools

Hybrid Approaches

Many organizations implement both architectures:

Data Lake as Foundation: Store all raw data in the lake, then extract and transform relevant datasets into the warehouse for optimized reporting.

Data Lakehouse: Emerging architecture combining lake flexibility with warehouse performance through technologies like Delta Lake, Apache Iceberg, and Apache Hudi that add transactional capabilities to data lakes.

Data Lake Platforms

Cloud-Native Solutions

AWS Lake Formation: Managed service for building data lakes on Amazon S3 with integrated security, cataloging, and ETL capabilities.

Azure Data Lake Storage: Scalable storage optimized for big data analytics with hierarchical namespace support and enterprise security.

Google Cloud Storage: Object storage forming the foundation for data lakes with BigQuery integration for SQL analytics.

Open-Source Technologies

Apache Hadoop: Distributed processing framework with HDFS storage, forming the foundation for many on-premises data lakes.

Delta Lake: Open-source storage layer adding reliability features to data lakes including ACID transactions and time travel.

Apache Iceberg: Table format providing warehouse-like capabilities on data lakes with schema evolution and hidden partitioning.

Apache Hudi: Data lake platform enabling record-level updates and deletes on analytical datasets.

Unified Analytics Platforms

Databricks Lakehouse: Platform combining data lake storage with warehouse capabilities and integrated machine learning.

Snowflake: Cloud data platform extending beyond warehousing to support semi-structured data with data lake-like flexibility.

Azure Synapse Analytics: Integrated analytics service combining data warehousing, big data processing, and data integration.

Best Practices for Data Lake Implementation

Establish Governance Early

Implement metadata management and data cataloging from the start. Define naming conventions, folder hierarchies, and documentation standards before accumulating large volumes of undocumented data.

Organize Data Thoughtfully

Structure data lakes using logical organization schemes that reflect business domains or data lifecycle stages. Implement consistent partitioning strategies that align with common query patterns.

Monitor Data Quality

Deploy automated data quality checks that validate incoming data against expected patterns. Track data freshness to identify stale or abandoned datasets.

Optimize for Performance

Use appropriate file formats for workload characteristics. Maintain optimal file sizes through periodic compaction. Implement partitioning schemes that enable query optimization.
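Periodic compaction starts with deciding which small files to merge. The sketch below plans batches with a simple first-fit heuristic; the function name, thresholds, and file list are illustrative assumptions, and engines with built-in compaction use their own strategies.

```python
def plan_compaction(files, target_bytes, small_threshold):
    """Group small files into batches whose combined size stays within target_bytes.

    files: list of (name, size_in_bytes) tuples.
    Files at or above small_threshold are left untouched.
    """
    small = sorted(
        (f for f in files if f[1] < small_threshold),
        key=lambda f: f[1],
        reverse=True,
    )
    batches = []  # each entry: [total_size, [names]]
    for name, size in small:
        for batch in batches:
            if batch[0] + size <= target_bytes:
                batch[0] += size
                batch[1].append(name)
                break
        else:
            batches.append([size, [name]])
    # Rewriting a batch that holds a single file gains nothing.
    return [names for _, names in batches if len(names) > 1]
```

Each returned batch would then be rewritten as one larger file, reducing per-file overhead during queries.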

Implement Security Layers

Apply encryption for data at rest and in transit. Implement role-based access controls with least-privilege principles. Maintain comprehensive audit logs of data access.

The Future of Data Lakes

Transactional Data Lakes

Technologies like Delta Lake and Apache Iceberg are bringing ACID transaction guarantees to data lakes, enabling reliable updates and deletions while maintaining analytical performance. This evolution blurs the distinction between lakes and warehouses.
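The general idea behind log-based transactional tables can be sketched with an append-only commit log on a local filesystem. This is a simplified illustration loosely inspired by that idea, not the actual protocol of Delta Lake or Apache Iceberg; the TableLog class, file naming, and demo function are all hypothetical, and a real implementation must also handle partially written commits and object-store semantics.

```python
import json
import os
import tempfile


class TableLog:
    """Append-only commit log: each commit is a numbered JSON file."""

    def __init__(self, log_dir):
        self.log_dir = log_dir
        os.makedirs(log_dir, exist_ok=True)

    def latest_version(self):
        versions = [
            int(name.split(".")[0])
            for name in os.listdir(self.log_dir)
            if name.endswith(".json")
        ]
        return max(versions, default=-1)

    def commit(self, expected_version, add=(), remove=()):
        """Write version expected_version + 1; fail if another writer got there first."""
        version = expected_version + 1
        path = os.path.join(self.log_dir, f"{version:020d}.json")
        # Mode "x" creates the file exclusively and raises FileExistsError on conflict.
        with open(path, "x") as handle:
            json.dump({"add": list(add), "remove": list(remove)}, handle)
        return version

    def snapshot(self, version=None):
        """Replay commits up to and including version to find the live data files."""
        if version is None:
            version = self.latest_version()
        live = set()
        for v in range(version + 1):
            with open(os.path.join(self.log_dir, f"{v:020d}.json")) as handle:
                entry = json.load(handle)
            live -= set(entry["remove"])
            live |= set(entry["add"])
        return live


def demo():
    """Commit twice, then read both the current and an earlier snapshot."""
    with tempfile.TemporaryDirectory() as log_dir:
        log = TableLog(log_dir)
        v0 = log.commit(-1, add=["part-0.parquet"])
        log.commit(v0, add=["part-1.parquet"], remove=["part-0.parquet"])
        try:
            log.commit(v0, add=["late.parquet"])  # stale writer
            conflict = False
        except FileExistsError:
            conflict = True
        return log.snapshot(), log.snapshot(0), conflict
```

Readers see a consistent set of files for any version, a stale writer is rejected instead of silently overwriting, and reading an older version gives a simple form of time travel.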

AI-Powered Data Management

Machine learning will increasingly automate data lake management tasks including metadata generation, data quality monitoring, access pattern optimization, and dataset recommendation based on analytical context.

Real-Time Data Lakes

Streaming architectures enable data lakes to support near-real-time analytics, moving beyond traditional batch processing to provide fresh insights with minimal latency.

Unified Metadata

Cross-platform metadata standards will enable consistent data discovery and governance across hybrid architectures combining lakes, warehouses, and operational databases.

Data lakes have become essential infrastructure for organizations dealing with diverse data types and evolving analytical requirements. While they introduce governance challenges, proper implementation provides cost-effective, flexible foundations for comprehensive analytics and machine learning initiatives.

Platforms like FireAI make data lake content accessible to non-technical users through natural language interfaces, enabling business users to query raw data without SQL expertise while maintaining the flexibility and cost benefits that make data lakes attractive.

Frequently Asked Questions

A data lake is a centralized repository that stores vast amounts of raw data in its native format, including structured, semi-structured, and unstructured data. It uses a schema-on-read approach, storing data first and applying structure during analysis, enabling flexible exploration and diverse analytical workloads.

Data lakes store raw data in native formats with schema-on-read, supporting diverse data types and exploratory analysis. Data warehouses store structured, processed data with predefined schemas optimized for known queries and reporting. Lakes prioritize flexibility and cost, warehouses prioritize performance and consistency.

Benefits include flexibility to store any data type, lower storage costs compared to warehouses, support for advanced analytics and machine learning, unified data platform reducing silos, and future-proofing by preserving raw data for new analytical techniques.

A data swamp occurs when a data lake lacks proper governance and metadata management, making datasets difficult to discover, understand, or trust. Prevention requires comprehensive metadata, data quality monitoring, access pattern tracking, and clear organizational standards.

Schema-on-read is an approach where data is stored in raw format without predefined structure, and schema is applied during query time based on analytical requirements. This contrasts with schema-on-write used in data warehouses, where structure is defined before data ingestion.

Common formats include Parquet for columnar storage with efficient compression, ORC for optimized row-columnar storage, Avro for streaming data with schema evolution, JSON for semi-structured data, and Delta Lake for transactional capabilities on data lakes.

A data lakehouse combines the flexibility of data lakes with the performance and reliability of data warehouses. It uses technologies like Delta Lake, Apache Iceberg, or Apache Hudi to add ACID transactions, schema enforcement, and query optimization to data lake storage.

Cloud data lake platforms include AWS Lake Formation on Amazon S3, Azure Data Lake Storage with Azure Synapse, Google Cloud Storage with BigQuery, and Databricks Lakehouse. These services offer managed data lake capabilities with integrated security, cataloging, and analytics.

Prevention requires establishing governance early with metadata management and data cataloging, organizing data thoughtfully with logical structures, monitoring data quality through automated checks, implementing security layers, and maintaining documentation standards throughout the data lifecycle.

The future includes transactional capabilities through lakehouse architectures, AI-powered data management automating metadata and quality tasks, real-time data lakes supporting streaming analytics, unified metadata enabling cross-platform governance, and convergence with warehouse capabilities for integrated analytics.

Related Questions In This Topic

What is Data Democratization? Benefits, Challenges, and Implementation Guide

Data democratization enables all employees to access and analyze business data without technical barriers. Learn how data democratization works, which benefits it provides, and how to implement it to transform organizations into data-driven cultures.

What is a Data Warehouse? Definition, Architecture, and Benefits

A data warehouse is a centralized repository that stores structured data from multiple sources optimized for analytical queries and business intelligence. Learn how data warehouses work, which architecture to use, and how they enable efficient reporting and data-driven decision-making.

What is Data Blending? Definition, Benefits, and Examples

Data blending combines data from multiple sources without traditional database joins, enabling analysis across disparate systems. Learn how data blending works, which benefits it provides, and see examples of flexible cross-source analytics.

What is Data Lineage? Definition, Benefits, and Use Cases

Data lineage tracks data flow from sources through transformations to consumption, showing origins, processing steps, and dependencies. Learn how data lineage works, which benefits it provides, and how it supports governance, troubleshooting, and impact analysis.

Related Guides From Our Blog

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

How a Modern Analytics Platform Transforms Business Intelligence

Why faster decision-making, real-time analytics, and AI-driven intelligence separate market leaders from laggards—and how Fire AI closes the gap between data and action.

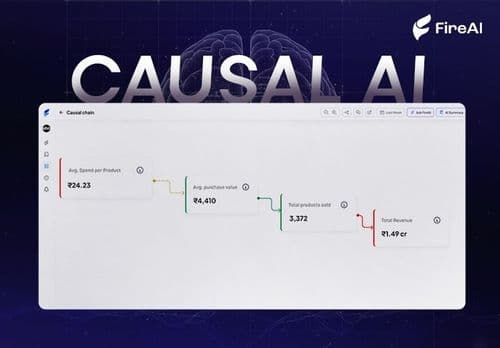

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.