What is Machine Learning in Analytics? Methods, Examples, and Applications

Quick Answer

Machine learning in analytics uses algorithms that automatically learn from data to identify patterns, make predictions, and generate insights without explicit programming. ML transforms traditional analytics by enabling automated discovery, predictive modeling, and intelligent decision-making across vast datasets.


Machine learning has reshaped the field of analytics. It introduces systems that learn from data, adapt to new information, and make predictions that can become more accurate as more data becomes available. ML bridges the gap between traditional statistical analysis and modern AI-powered business intelligence, helping organizations extract more value from their data and make better-informed decisions. It also powers augmented analytics systems, which automate parts of insight discovery.

What is Machine Learning in Analytics?

Machine learning in analytics refers to the application of algorithms and statistical models that enable computer systems to automatically learn from data and improve their performance without being explicitly programmed. These algorithms identify patterns, relationships, and insights in data that might be too complex or subtle for traditional analytical methods to detect.

ML transforms analytics from a manual, rule-based process to an automated, intelligent system that can adapt, learn, and evolve. By processing vast amounts of data and identifying non-obvious relationships, machine learning enables predictive analytics, automated insights generation, and intelligent decision support.

Core Characteristics

Automated Learning: Systems improve performance through data exposure without manual reprogramming.

Pattern Discovery: Identifies complex relationships and patterns in data that are difficult or impractical for people to find manually.

Scalability: Handles massive datasets and complex calculations efficiently.

Adaptability: Models evolve and improve as new data becomes available.

Predictive Power: Generates forecasts and insights for future outcomes.

How Machine Learning Works in Analytics

Learning Paradigms

Different approaches to machine learning:

Supervised Learning: Training models on labeled data where the correct answers are known.

- Classification: Categorizing data into predefined classes (spam detection, churn prediction)

- Regression: Predicting continuous numerical values (sales forecasting, price prediction)

- Examples: Decision trees, random forests, neural networks, support vector machines
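To make supervised learning concrete, here is a minimal sketch of the simplest decision tree, a one-split "decision stump", in plain Python with no libraries. The toy churn data and the function names fit_stump and predict_stump are illustrative assumptions. Libraries such as Scikit-learn provide full decision-tree implementations.

```python
# Minimal sketch (illustrative, not a production implementation):
# a decision stump is a depth-1 decision tree that learns a single
# threshold on a single feature from labeled examples.

def fit_stump(X, y):
    """Return (feature_index, threshold, left_label, right_label) with the fewest training errors."""
    best = None
    for j in range(len(X[0])):
        for threshold in sorted({row[j] for row in X}):
            left = [label for row, label in zip(X, y) if row[j] <= threshold]
            right = [label for row, label in zip(X, y) if row[j] > threshold]
            # Each side predicts its majority label; an empty side falls back to 0 (arbitrary).
            left_label = max(set(left), key=left.count) if left else 0
            right_label = max(set(right), key=right.count) if right else 0
            errors = sum(l != left_label for l in left) + sum(r != right_label for r in right)
            if best is None or errors < best[0]:
                best = (errors, j, threshold, left_label, right_label)
    return best[1:]


def predict_stump(stump, row):
    j, threshold, left_label, right_label = stump
    return left_label if row[j] <= threshold else right_label


# Hypothetical labeled data: [support_tickets, tenure_months] -> churned (1) or not (0).
X = [[1, 20], [2, 22], [8, 21], [9, 23]]
y = [0, 0, 1, 1]
stump = fit_stump(X, y)
print(stump)                          # (0, 2, 0, 1): split on feature 0 at <= 2
print(predict_stump(stump, [7, 24]))  # 1
```

Random forests and gradient boosting build on this same idea by combining many deeper trees.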

Unsupervised Learning: Finding patterns in data without predefined labels.

- Clustering: Grouping similar data points (customer segmentation, anomaly detection)

- Dimensionality Reduction: Simplifying data while preserving important information

- Association Mining: Discovering relationships between variables

- Examples: K-means clustering, principal component analysis, autoencoders
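Association mining is usually summarized with two quantities: support (how often an itemset appears) and confidence (how often a rule's consequent appears when its antecedent does). The sketch below computes both for a single rule in plain Python. The shopping baskets are made up for illustration, and algorithms such as Apriori add the search over candidate itemsets, which is omitted here.

```python
# Support and confidence for a single association rule A -> C (illustrative sketch).

def support(transactions, itemset):
    """Fraction of transactions that contain every item in itemset."""
    itemset = set(itemset)
    return sum(itemset <= set(t) for t in transactions) / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Estimate of P(consequent | antecedent) = support(A and C) / support(A)."""
    joint = support(transactions, set(antecedent) | set(consequent))
    return joint / support(transactions, antecedent)


# Hypothetical shopping baskets.
baskets = [{"bread", "milk"}, {"bread", "butter"}, {"bread", "milk", "butter"}, {"milk"}]
print(support(baskets, {"bread", "milk"}))       # 0.5
print(confidence(baskets, {"bread"}, {"milk"}))  # about 0.667
```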

Semi-Supervised Learning: Combining labeled and unlabeled data for training.

- Leverages limited labeled data with abundant unlabeled data

- Can improve model accuracy with less annotation effort

- Useful in scenarios with scarce labeled examples

Reinforcement Learning: Learning through interaction and feedback.

- Agent learns optimal actions through trial and error

- Maximizes rewards in dynamic environments

- Applications in recommendation systems and automated decision-making
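A minimal instance of learning from reward feedback is the epsilon-greedy multi-armed bandit. The agent usually exploits the option with the best estimated reward and occasionally explores at random. A bandit is a simplified reinforcement learning setting with no state transitions. The sketch simulates one using made-up click-through rates for three hypothetical recommendation options.

```python
# Epsilon-greedy multi-armed bandit (illustrative sketch with simulated rewards).
import random


def epsilon_greedy(true_rewards, steps=5000, epsilon=0.1, seed=0):
    """Simulate an agent choosing among arms whose rewards are Bernoulli(true_rewards[arm])."""
    rng = random.Random(seed)
    counts = [0] * len(true_rewards)
    estimates = [0.0] * len(true_rewards)  # running mean reward per arm
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(true_rewards))  # explore
        else:
            arm = max(range(len(estimates)), key=estimates.__getitem__)  # exploit
        reward = 1.0 if rng.random() < true_rewards[arm] else 0.0
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # incremental mean
    return counts, estimates


counts, estimates = epsilon_greedy([0.05, 0.10, 0.30])
print(counts)  # the 0.30 arm typically receives most of the pulls
```

The epsilon parameter sets the exploration rate. Higher values learn the reward estimates faster but spend more traffic on weaker options.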

Model Development Process

Structured approach to building ML models:

Data Preparation: Cleaning, transforming, and structuring data for modeling.

- Feature Engineering: Creating relevant input variables from raw data

- Data Cleaning: Handling missing values, outliers, and inconsistencies

- Normalization: Scaling data to comparable ranges

- Train/Test Split: Dividing data for model training and validation
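The split and normalization steps can be sketched in plain Python. One detail matters: the scaling range is learned from the training split only and then reused on the test split, so no information from the test data leaks into training. The function names are illustrative. This train_test_split is a local helper, not Scikit-learn's function of the same name.

```python
# Train/test split plus min-max normalization fitted on the training split only.
import random


def train_test_split(rows, test_fraction=0.25, seed=42):
    """Shuffle a copy of rows and return (train, test)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def fit_min_max(train_values):
    # Learn the scaling range from training data only, to avoid test-set leakage.
    return min(train_values), max(train_values)


def transform_min_max(values, params):
    lo, hi = params
    span = (hi - lo) or 1.0  # guard against a constant feature
    return [(v - lo) / span for v in values]


train, test = train_test_split(list(range(20)))
print(len(train), len(test))  # 15 5
params = fit_min_max([10, 20, 30])
print(transform_min_max([10, 20, 30, 40], params))  # [0.0, 0.5, 1.0, 1.5]
```

A value outside the training range (40 above) maps outside [0, 1]. This is expected and is one reason to monitor production inputs.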

Model Training: Teaching algorithms to recognize patterns.

- Algorithm Selection: Choosing appropriate ML techniques for the task

- Hyperparameter Tuning: Optimizing model configuration parameters

- Cross-Validation: Ensuring model generalizability across different data subsets

- Performance Monitoring: Tracking accuracy, precision, and other metrics
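Cross-validation rotates which portion of the data is held out. The sketch below generates k-fold index splits in plain Python. It assumes the rows have already been shuffled if they have any meaningful order, and the function name is illustrative.

```python
# k-fold cross-validation splits (illustrative sketch; shuffle first if rows are ordered).

def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, validation_indices) for k contiguous, non-overlapping folds."""
    # The first n_samples % k folds get one extra sample so every row is validated exactly once.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, validation
        start += size


for train_idx, val_idx in k_fold_indices(10, k=3):
    print(len(train_idx), val_idx)
# 6 [0, 1, 2, 3]
# 7 [4, 5, 6]
# 7 [7, 8, 9]
```

In practice, a model is trained on each training split and scored on the matching validation split, and the k scores are averaged. During hyperparameter tuning, each candidate configuration is compared by this averaged score.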

Model Evaluation: Assessing model effectiveness and reliability.

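- Accuracy: The proportion of all predictions that are correct

- Precision and Recall: Precision measures how many predicted positives are actually positive; recall measures how many actual positives the model finds

- ROC Curves: Plotting the true positive rate against the false positive rate across classification thresholds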

- Confusion Matrices: Detailed breakdown of prediction performance
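The evaluation metrics above all derive from the four cells of a binary confusion matrix. The plain-Python sketch below computes them. It lays the matrix out as [[TN, FP], [FN, TP]], with rows for actual classes and columns for predicted classes. That layout and the convention of returning 0.0 when a denominator is zero are choices made for this sketch.

```python
# Confusion-matrix counts and the metrics derived from them (binary case, illustrative).

def binary_metrics(y_true, y_pred, positive=1):
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = len(pairs) - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many are right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "confusion": [[tn, fp], [fn, tp]],
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


m = binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 0, 1, 0, 1, 1, 0, 1])
print(m["confusion"])                              # [[2, 2], [1, 3]]
print(m["accuracy"], m["precision"], m["recall"])  # 0.625 0.6 0.75
```

An ROC curve repeats this calculation at many probability thresholds. At each threshold it plots recall (the true positive rate) against FP / (FP + TN) (the false positive rate).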

Model Deployment: Integrating trained models into production systems.

- Model Serving: Making predictions available through APIs and interfaces

- Monitoring: Tracking model performance in real-world applications

- Retraining: Updating models as new data becomes available

- Version Control: Managing different model versions and rollback capabilities

Key Machine Learning Techniques in Analytics

Classification Algorithms

Categorizing data into discrete classes:

- Logistic Regression: Predicting binary outcomes and probabilities

- Decision Trees: Creating rule-based classification models

- Random Forest: Ensemble of decision trees for improved accuracy

- Support Vector Machines: Finding optimal boundaries between classes

- Neural Networks: Deep learning models for complex classification tasks
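As an example from this list, logistic regression can be trained with plain batch gradient descent on the log-loss. The sketch below assumes a tiny made-up dataset with one feature, a fixed learning rate, and a fixed number of epochs. Library implementations use more robust solvers and usually add regularization.

```python
# Logistic regression trained with batch gradient descent on log-loss (illustrative sketch).
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, y, lr=0.1, epochs=2000):
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for row, label in zip(X, y):
            # Gradient of the log-loss with respect to the logit is (predicted probability - label).
            error = sigmoid(sum(wj * xj for wj, xj in zip(w, row)) + b) - label
            for j in range(d):
                grad_w[j] += error * row[j] / n
            grad_b += error / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict_proba(w, b, row):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, row)) + b)


# Hypothetical one-feature data: a usage score -> churned (1) or not (0).
X = [[0.5], [1.0], [1.5], [3.0], [3.5], [4.0]]
y = [0, 0, 0, 1, 1, 1]
w, b = fit_logistic(X, y)
print(predict_proba(w, b, [1.0]), predict_proba(w, b, [3.5]))  # low, then high
```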

Regression Algorithms

Predicting continuous numerical values:

- Linear Regression: Modeling the target as a linear combination of input variables

- Polynomial Regression: Capturing non-linear relationships

- Ridge and Lasso Regression: Regularized regression to prevent overfitting

- Gradient Boosting: Ensemble methods for accurate predictions

- Time Series Models: Specialized regression for temporal data
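For a single input variable, linear regression has a closed-form least-squares solution. Adding a ridge penalty only changes the slope's denominator when the intercept is left unpenalized. The sketch below also computes RMSE, a common regression error metric. It covers one feature only; multiple features and Lasso require matrix or iterative solvers.

```python
# Closed-form simple linear regression, with an optional ridge penalty on the slope.
import math


def fit_simple_linear(xs, ys, alpha=0.0):
    """Least-squares fit of y = slope * x + intercept; alpha > 0 shrinks the slope (ridge)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / (sxx + alpha)  # the intercept is left unpenalized
    intercept = mean_y - slope * mean_x
    return slope, intercept


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


xs, ys = [1, 2, 3, 4], [3, 5, 7, 9]          # hypothetical data on the line y = 2x + 1
print(fit_simple_linear(xs, ys))             # (2.0, 1.0)
print(fit_simple_linear(xs, ys, alpha=5.0))  # (1.0, 3.5): slope shrunk toward 0
slope, intercept = fit_simple_linear(xs, ys)
print(rmse(ys, [slope * x + intercept for x in xs]))  # 0.0
```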

Clustering Algorithms

Grouping similar data points:

- K-Means Clustering: Partitioning data into k distinct groups

- Hierarchical Clustering: Building nested clusters through agglomeration

- DBSCAN: Density-based clustering for arbitrary-shaped clusters

- Gaussian Mixture Models: Probabilistic clustering approach

- Spectral Clustering: Using graph theory for complex clustering tasks
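K-means alternates between two steps: it assigns each point to its nearest centroid, then moves each centroid to the mean of its assigned points, stopping when the centroids no longer change. The sketch below implements this in plain Python with random initialization from the data. The results can depend on the starting centroids, which is why libraries commonly use smarter seeding such as k-means++ and several restarts. The points are hypothetical.

```python
# K-means clustering (Lloyd's algorithm), illustrative sketch.
import random


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, iterations=100, seed=0):
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # random initial centroids drawn from the data
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid's cluster.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: squared_distance(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to its cluster mean (keep it if the cluster is empty).
        new_centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # converged: assignments will no longer change
        centroids = new_centroids
    return centroids, clusters


# Hypothetical customers described by two behavioral features.
points = [(1, 1), (1.5, 2), (1, 1.5), (8, 8), (8.5, 9), (9, 8)]
centroids, clusters = kmeans(points, k=2)
print(sorted(centroids))  # roughly (1.17, 1.5) and (8.5, 8.33)
```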

Dimensionality Reduction

Simplifying high-dimensional data:

- Principal Component Analysis (PCA): Linear dimensionality reduction

- t-SNE: Non-linear technique for visualization and exploration

- Autoencoders: Neural network-based dimensionality reduction

- Feature Selection: Selecting the most relevant variables

- Manifold Learning: Preserving local structure in reduced dimensions
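PCA finds the direction of greatest variance by computing the eigenvectors of the covariance matrix. In two dimensions this has a closed form, which the plain-Python sketch below uses so the idea stays visible without a linear algebra library. Restricting it to 2-D data is an assumption made for brevity; real PCA implementations handle any number of dimensions, typically via the singular value decomposition.

```python
# PCA for 2-D points via the closed-form eigen-decomposition of the 2x2 covariance matrix.
import math


def pca_2d(points):
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    # Sample covariance matrix [[a, b], [b, c]].
    a = sum((x - mx) ** 2 for x, _ in points) / (n - 1)
    c = sum((y - my) ** 2 for _, y in points) / (n - 1)
    b = sum((x - mx) * (y - my) for x, y in points) / (n - 1)
    # Eigenvalues of a symmetric 2x2 matrix.
    half_trace = (a + c) / 2
    radius = math.sqrt(((a - c) / 2) ** 2 + b ** 2)
    lam1, lam2 = half_trace + radius, half_trace - radius
    # Leading eigenvector: (lam1 - c, b), or an axis direction when b == 0.
    if b != 0:
        vx, vy = lam1 - c, b
    else:
        vx, vy = (1.0, 0.0) if a >= c else (0.0, 1.0)
    norm = math.hypot(vx, vy)
    component = (vx / norm, vy / norm)
    explained_ratio = lam1 / (lam1 + lam2)
    # Project centered points onto the first principal component (2-D -> 1-D).
    projected = [(x - mx) * component[0] + (y - my) * component[1] for x, y in points]
    return component, explained_ratio, projected


component, ratio, projected = pca_2d([(0, 0), (1, 2), (2, 4), (3, 6)])
print(component, ratio)  # close to (0.447, 0.894) and 1.0: one dimension captures everything
```

The sign of a principal component is arbitrary, so (-0.447, -0.894) would describe the same direction.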

Applications in Business Analytics

Customer Analytics

Understanding and predicting customer behavior:

- Customer Segmentation: Grouping customers by behavior and preferences

- Churn Prediction: Identifying customers likely to stop using services

- Lifetime Value Prediction: Forecasting long-term customer value

- Personalization: Recommending products based on individual preferences

- Fraud Detection: Identifying suspicious transaction patterns

Operational Analytics

Optimizing business operations:

- Demand Forecasting: Predicting product demand for inventory management

- Quality Control: Detecting defects and quality issues in manufacturing

- Supply Chain Optimization: Predicting disruptions and optimizing logistics

- Energy Consumption: Forecasting usage patterns for cost optimization

- Maintenance Prediction: Anticipating equipment failures for preventive maintenance

Financial Analytics

Supporting financial decision-making:

- Credit Scoring: Assessing borrower risk and creditworthiness

- Market Prediction: Forecasting stock prices and market trends

- Fraud Detection: Identifying fraudulent transactions and activities

- Risk Assessment: Evaluating portfolio risk and investment opportunities

- Algorithmic Trading: Automated trading strategies based on market patterns

Marketing Analytics

Optimizing marketing effectiveness:

- Campaign Optimization: Predicting campaign performance and ROI

- Lead Scoring: Identifying prospects most likely to convert

- Content Personalization: Recommending content based on user behavior

- Attribution Modeling: Understanding which marketing channels drive conversions

- A/B Testing Optimization: Adaptively shifting traffic toward better-performing variations (for example, with multi-armed bandit algorithms)

ML Model Management and Governance

Model Lifecycle Management

Systematic approach to model maintenance:

- Version Control: Tracking model versions and changes over time

- Model Registry: Centralized repository for model storage and metadata

- Deployment Pipelines: Automated processes for model deployment and updates

- Rollback Capabilities: Ability to revert to previous model versions

- Audit Trails: Complete history of model changes and decisions

Performance Monitoring

Ensuring ongoing model effectiveness:

- Accuracy Tracking: Monitoring prediction accuracy over time

- Drift Detection: Identifying shifts in input data or in the input-output relationship that degrade model performance

- Bias Monitoring: Detecting unfair or biased model behavior

- Latency Monitoring: Tracking prediction response times

- Resource Usage: Monitoring computational requirements
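One common way to quantify drift in a single input feature is the Population Stability Index (PSI). PSI compares the feature's binned distribution at training time with its distribution in production, using the formula PSI = sum over bins of (actual_i - expected_i) * ln(actual_i / expected_i). The sketch below is a minimal version that assumes fixed, hand-chosen bin edges. It places values outside the edges in the outer bins and uses a small epsilon so that empty bins do not produce log(0). A widely quoted rule of thumb treats PSI above roughly 0.25 as a significant shift, but thresholds vary by team.

```python
# Population Stability Index between a training sample and a production sample (sketch).
import bisect
import math


def population_stability_index(expected, actual, bin_edges, eps=1e-6):
    n_bins = len(bin_edges) - 1

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            i = bisect.bisect_right(bin_edges, v) - 1
            counts[min(max(i, 0), n_bins - 1)] += 1  # clamp out-of-range values into outer bins
        return [max(count / len(values), eps) for count in counts]  # eps avoids log(0)

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0, 2.5, 5, 7.5, 10]
training = [1, 2, 3, 4, 5, 6, 7, 8]
production = [5, 6, 7, 8, 8, 8, 8, 8]
print(population_stability_index(training, training, edges))    # 0.0
print(population_stability_index(training, production, edges))  # well above 0.25
```

When bins are empty, the result is sensitive to eps. Choosing bins from training-data quantiles helps keep every bin populated.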

Ethical Considerations

Ensuring responsible ML deployment:

- Bias Detection: Identifying and mitigating unfair model behavior

- Explainability: Understanding and explaining model decisions

- Privacy Protection: Safeguarding sensitive data in model training

- Fairness Assessment: Ensuring equitable treatment across user groups

- Transparency: Making model behavior and limitations clear
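As a concrete example of bias monitoring, the demographic parity difference is the gap between groups in the rate of positive predictions. It is only one of several fairness definitions; others compare error rates such as false positive rates across groups. Which definition is appropriate depends on the application. The predictions and group labels below are hypothetical.

```python
# Demographic parity difference: gap in positive-prediction rates across groups (sketch).

def demographic_parity_difference(predictions, groups):
    rates = {}
    for g in set(groups):
        group_preds = [p for p, grp in zip(predictions, groups) if grp == g]
        rates[g] = sum(group_preds) / len(group_preds)  # share of positive (1) predictions
    return max(rates.values()) - min(rates.values()), rates


predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
gap, rates = demographic_parity_difference(predictions, groups)
print(rates["A"], rates["B"], gap)  # 0.75 0.25 0.5
```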

Challenges and Solutions

Data Quality Issues

Ensuring reliable model training:

- Data Cleaning: Removing noise, errors, and inconsistencies

- Feature Engineering: Creating meaningful input variables

- Data Imbalance: Handling uneven class distributions

- Missing Data: Appropriate imputation and handling strategies

- Outlier Management: Dealing with extreme values that could skew models
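Two of these data-quality steps fit in a few lines using Python's standard statistics module. Missing values, represented here as None, are filled with the median of the observed values. Outliers are flagged with the interquartile-range (IQR) rule, which treats points more than 1.5 IQRs beyond the quartiles as outliers. Median imputation and the 1.5 multiplier are common defaults, not the only reasonable choices.

```python
# Median imputation and IQR-based outlier flagging (illustrative sketch, standard library only).
import statistics


def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    median = statistics.median(observed)
    return [median if v is None else v for v in values]


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


print(impute_median([1, None, 3, 5]))               # [1, 3, 3, 5]
print(iqr_outliers([10, 12, 11, 13, 12, 11, 100]))  # [100]
```

Whether a flagged value should be removed, capped, or kept depends on whether it is an error or a genuine rare event, such as a large fraudulent transaction.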

Model Overfitting

Preventing models that perform well on training data but poorly on new data:

- Cross-Validation: Testing models on multiple data subsets

- Regularization: Penalizing model complexity, for example large coefficient values

- Early Stopping: Halting training when performance on a validation set stops improving

- Ensemble Methods: Combining multiple models to improve generalization

- Simpler Models: Using less complex algorithms when appropriate
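Early stopping is usually implemented with a "patience" setting: training continues until validation loss has failed to improve for a set number of epochs, and then the weights from the best epoch are kept. To stay self-contained, the sketch below runs the stopping logic over a precomputed list of hypothetical validation losses. In a real training loop, each loss would be computed after an epoch.

```python
# Early stopping with patience, applied to a sequence of validation losses (sketch).

def early_stopping_epoch(validation_losses, patience=3):
    """Return the best epoch seen before `patience` epochs pass without improvement."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break  # stop training; restore the weights saved at best_epoch
    return best_epoch


losses = [0.9, 0.7, 0.6, 0.62, 0.65, 0.7, 0.4]
print(early_stopping_epoch(losses, patience=3))  # 2
print(early_stopping_epoch(losses, patience=5))  # 6
```

The two calls show the trade-off. A short patience saves compute but can stop before a later improvement, while a longer patience gives the model more chances to recover.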

Computational Complexity

Managing resource requirements for large-scale ML:

- Distributed Computing: Using frameworks like Apache Spark for scalability

- Model Optimization: Reducing model size and computational requirements

- Edge Computing: Running models on resource-constrained devices

- Model Compression: Techniques such as pruning and quantization that reduce model size with little loss of accuracy

- Efficient Algorithms: Choosing computationally efficient ML approaches

Interpretability Challenges

Understanding complex model decisions:

- Model Explainability: Techniques to understand model reasoning

- Feature Importance: Identifying which variables influence predictions

- Partial Dependence Plots: Visualizing relationships between features and predictions

- SHAP Values: Measuring contribution of each feature to individual predictions

- Simplified Models: Using interpretable models when transparency is critical
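Feature importance can be estimated for any model with permutation importance. The method shuffles one feature's column to break its link with the target, then measures how much a metric drops. This is a different technique from SHAP values, which attribute individual predictions. The sketch below uses a hypothetical model that, by construction, ignores its second feature, so that feature's importance comes out as exactly zero.

```python
# Permutation feature importance for any predict function (model-agnostic sketch).
import random


def permutation_importance(predict, X, y, metric, n_repeats=10, seed=0):
    """Average drop in `metric` when one feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = metric(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - metric(y, [predict(row) for row in X_perm]))
        importances.append(sum(drops) / n_repeats)
    return importances


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def model(row):
    # Hypothetical model that only looks at feature 0.
    return 1 if row[0] > 0.5 else 0


X = [[i / 10, (i * 7 % 10) / 10] for i in range(10)]
y = [model(row) for row in X]
print(permutation_importance(model, X, y, accuracy))  # feature 0 > 0, feature 1 == 0.0
```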

Implementation Best Practices

Start with Clear Objectives

Define specific analytical goals:

- Business Problem: Clearly articulate the problem ML should solve

- Success Metrics: Define measurable outcomes for model performance

- Data Availability: Ensure sufficient and appropriate data for modeling

- Resource Requirements: Assess computational and expertise needs

- Timeline Expectations: Set realistic development and deployment timelines

Choose Appropriate Tools and Platforms

Select technology stack based on needs:

- Development Frameworks: Scikit-learn, TensorFlow, PyTorch for model building

- AutoML Platforms: Automated machine learning for faster development

- MLOps Platforms: Tools for model deployment, monitoring, and management

- Cloud Services: AWS SageMaker, Google AI Platform, Azure ML for scalable solutions

- Integration Tools: APIs and connectors for existing systems

Ensure Data Readiness

Prepare high-quality data for modeling:

- Data Collection: Gather comprehensive and representative datasets

- Data Labeling: Ensure accurate labels for supervised learning tasks

- Feature Engineering: Create meaningful input variables from raw data

- Data Validation: Implement quality checks and validation procedures

- Data Pipeline: Establish automated processes for ongoing data preparation

Validate and Test Models

Ensure model reliability and performance:

- Cross-Validation: Test models on multiple data subsets

- A/B Testing: Compare model performance against baselines

- Shadow Testing: Test new models alongside existing systems

- Performance Benchmarks: Compare against industry standards

- Edge Case Testing: Validate behavior with unusual or extreme inputs

Monitor and Maintain Models

Ensure long-term model effectiveness:

- Performance Tracking: Monitor accuracy, latency, and other metrics

- Drift Detection: Identify when models need retraining

- Automated Retraining: Set up pipelines for model updates

- Version Management: Maintain model lineage and rollback capabilities

- Continuous Improvement: Regularly assess and enhance model performance

The Future of Machine Learning in Analytics

Automated Machine Learning (AutoML)

Democratizing ML development:

- Automated Feature Engineering: AI-driven feature creation and selection

- Neural Architecture Search: Automated design of neural network architectures

- Hyperparameter Optimization: Intelligent tuning of model parameters

- Model Selection: Automatic choice of best algorithms for specific tasks

- Pipeline Automation: End-to-end automated ML workflows

Explainable AI (XAI)

Making ML more transparent and trustworthy:

- Model Interpretability: Techniques to understand complex model decisions

- Causal Inference: Understanding cause-and-effect relationships

- Counterfactual Explanations: Explaining what would happen under different conditions

- Uncertainty Quantification: Expressing confidence in model predictions

- Bias Detection: Automated identification and mitigation of unfair behavior

Edge and Federated Learning

Distributed ML capabilities:

- Edge Computing: Running ML models on devices and sensors

- Federated Learning: Training models across distributed data sources

- Privacy-Preserving ML: Techniques that protect data privacy during training

- Decentralized Intelligence: Moving intelligence closer to data sources

- Real-Time Adaptation: Models that learn and adapt in real-time
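Federated Averaging (FedAvg) is a well-known federated learning scheme. Each client trains on its own data and sends only model parameters to a coordinating server, which averages them weighted by each client's number of training examples, so the raw data never leaves the clients. The sketch below shows only that aggregation step and represents parameters as plain lists for brevity. Local training, communication, and any additional privacy techniques are omitted.

```python
# Server-side aggregation step of Federated Averaging (FedAvg), illustrative sketch.

def federated_average(client_weights, client_sizes):
    """Average parameter vectors, weighting each client by its number of local training examples."""
    total = sum(client_sizes)
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(len(client_weights[0]))
    ]


# Two hypothetical clients that trained locally and shared only their parameters.
print(federated_average([[1.0, 2.0], [3.0, 4.0]], [100, 300]))  # [2.5, 3.5]
```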

Multimodal and Cross-Domain Learning

Handling diverse data types:

- Multimodal Learning: Processing text, images, audio, and sensor data together

- Transfer Learning: Applying knowledge from one domain to another

- Meta-Learning: Learning to learn more efficiently across tasks

- Few-Shot Learning: Training effective models with limited data

- Continual Learning: Models that accumulate knowledge over time

Machine learning has fundamentally transformed analytics, enabling organizations to extract deeper insights, make more accurate predictions, and automate complex decision-making processes. By learning from data patterns that would be impractical for humans to detect manually, ML-powered analytics provides a competitive advantage in an increasingly data-driven business environment.

Platforms like FireAI leverage machine learning to provide intelligent analytics capabilities, enabling users to discover insights, make predictions, and drive data-driven decisions with unprecedented speed and accuracy.


Frequently Asked Questions

Machine learning in analytics uses algorithms that automatically learn from data to identify patterns, make predictions, and generate insights without explicit programming. ML transforms traditional analytics by enabling automated discovery, predictive modeling, and intelligent decision-making across vast datasets.

Main types include supervised learning (learning from labeled data for prediction), unsupervised learning (finding patterns in unlabeled data), semi-supervised learning (combining labeled and unlabeled data), and reinforcement learning (learning through trial-and-error with rewards). Each type serves different analytical purposes.

Traditional analytics relies on predefined rules and statistical methods, while machine learning automatically discovers patterns and relationships in data. ML can model complex, non-linear relationships, scale to massive datasets, and improve over time as models are retrained on new data. These capabilities extend beyond traditional statistical approaches.

Common algorithms include linear regression for prediction, decision trees for classification, k-means clustering for grouping, neural networks for complex patterns, random forests for ensemble learning, support vector machines for classification boundaries, and gradient boosting for improved predictions through iterative refinement.

ML is applied for customer churn prediction, demand forecasting, fraud detection, recommendation systems, customer segmentation, quality control, predictive maintenance, credit scoring, market trend analysis, and automated decision-making across various business functions and industries.

ML requires quality data including historical records, labeled examples for supervised learning, diverse examples for robust models, sufficient volume for statistical significance, relevant features for prediction, and clean data free from errors and inconsistencies. Data quality directly impacts model accuracy and reliability.

Challenges include data quality and preparation requirements, model interpretability issues, computational resource needs, overfitting risks, concept drift where models become outdated, bias and fairness concerns, and the need for specialized expertise for model development and maintenance.

Yes, through AutoML platforms that automate model selection, feature engineering, hyperparameter tuning, and deployment. However, human expertise is still valuable for problem formulation, result interpretation, business context integration, and ensuring ethical and responsible ML implementation.

Models are evaluated using accuracy, precision, recall, F1-score, AUC-ROC for classification tasks, and MSE, RMSE, MAE for regression. Cross-validation ensures generalizability, while business metrics like ROI and impact assessment measure real-world effectiveness.

The future includes AutoML for automated model development, explainable AI for transparency, edge computing for real-time processing, multimodal learning for diverse data types, federated learning for privacy preservation, and integration with other AI technologies for more comprehensive analytical capabilities.

Related Questions In This Topic

What is Multilingual Analytics? Benefits, Languages, and Use Cases

Multilingual analytics enables business intelligence in multiple languages, breaking down language barriers in data analysis. Learn how multilingual analytics works, which languages are supported, and how businesses use it for regional language insights.

What is Text to SQL? How It Works, Examples, and Tools

Text to SQL converts natural language questions into SQL queries automatically using AI. Learn how text-to-SQL works, see real examples, and discover tools that enable anyone to query databases without SQL knowledge.

What is Predictive Analytics? Methods, Examples, and Business Applications

Predictive analytics uses statistical algorithms and machine learning to forecast future outcomes based on historical data. Learn how predictive modeling works, which methods are used, and how businesses apply it for sales forecasting, risk management, and strategic planning.

What is a Large Language Model (LLM)? Definition, How It Works, and Examples

Large language models (LLMs) are AI systems trained on massive text datasets to understand and generate human-like language. Learn how LLMs like GPT work, which applications they power, and see real examples of LLM-powered conversational AI.

Related Guides From Our Blog

How a Modern Analytics Platform Transforms Business Intelligence

Why faster decision-making, real-time analytics, and AI-driven intelligence separate market leaders from laggards—and how Fire AI closes the gap between data and action.

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

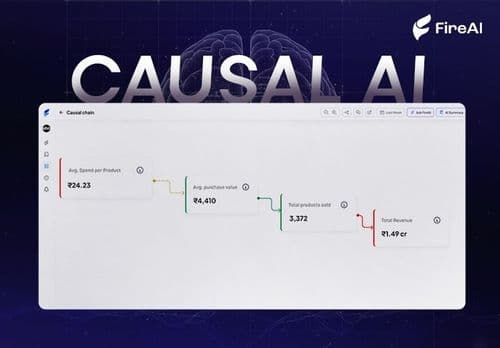

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.