What is Anomaly Detection? Techniques, Examples & How It Works (2026 Guide)

Quick Answer

Anomaly detection is the process of identifying unusual patterns, outliers, or events in data that differ significantly from normal behavior. It uses statistical methods (z-scores, standard deviations) and machine learning algorithms to flag abnormal occurrences automatically. Common applications include fraud detection (unusual transactions), cybersecurity (suspicious login attempts), system monitoring (server failures), quality control (defective products), and health monitoring (patient vitals). It can run in real time on streaming data or in batch on historical data.

Your credit card company just blocked a fraudulent transaction—seconds after someone tried to use your card in another country. How did they know? Anomaly detection.

An e-commerce site detects a sudden spike in failed payments and immediately investigates a payment gateway issue—before losing millions in sales. Anomaly detection.

A manufacturing plant catches a subtle vibration pattern in machinery that predicts failure 2 weeks before breakdown. You guessed it—anomaly detection.

Anomaly detection is an automated watchdog that never sleeps, continuously monitoring your data for anything unusual that could indicate problems, fraud, or opportunities. It is a key capability of diagnostic analytics systems, which help explain why unexpected events occurred.

What is Anomaly Detection?

Anomaly detection is the process of automatically identifying unusual patterns, outliers, or events in data that differ significantly from normal behavior.

Simple Definition: It's like having a security guard who knows what "normal" looks like and immediately alerts you when something seems off.

Real-World Example: If your website typically gets 1,000 visitors per hour, and suddenly drops to 100, anomaly detection would instantly flag this 90% drop and alert you to investigate—potentially catching a server issue before you lose significant business.

These anomalies (also called outliers) represent observations that differ substantially from the majority of data, potentially indicating critical incidents, emerging opportunities, or system malfunctions.

The technology combines statistical methods, machine learning algorithms, and domain expertise to establish baselines of normal behavior and automatically flag deviations. Anomaly detection operates across various data types including time series, transactional data, user behavior, system metrics, and sensor readings.

Core Characteristics

Automated Monitoring: Continuous surveillance of data streams without human intervention.

Pattern Recognition: Identification of deviations from established normal patterns.

Real-Time Processing: Immediate detection and alerting for time-sensitive anomalies.

Scalable Analysis: Ability to handle large volumes of data across multiple dimensions.

Adaptive Learning: Systems that evolve and learn from new data patterns over time.

How Anomaly Detection Works

Data Collection and Preparation

Anomaly detection begins with comprehensive data gathering:

- Time Series Data: Sequential measurements over time (system metrics, sales data, sensor readings)

- Transactional Data: Individual events and transactions (financial transactions, user interactions)

- Behavioral Data: User actions and patterns (website visits, application usage, network traffic)

- Multivariate Data: Multiple related variables analyzed together (system performance indicators)

- Streaming Data: Real-time data flows requiring immediate processing

Baseline Establishment

Systems learn what constitutes normal behavior:

- Statistical Baselines: Mean, standard deviation, and distribution characteristics

- Machine Learning Models: Trained models that capture complex normal patterns

- Historical Analysis: Analysis of past data to establish expected ranges

- Seasonal Adjustments: Accounting for regular patterns and cyclical variations

- Contextual Factors: Incorporating business rules and environmental conditions
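
As a minimal sketch of a seasonal baseline, assuming hourly data where the hour of day is the main cycle, the Python below learns a separate mean and standard deviation for each hour and judges new values against their own hour. The function names and the 3-sigma cutoff are illustrative choices, not a prescribed method.

```python
from collections import defaultdict
from statistics import mean, stdev

def build_hourly_baseline(history):
    """history: iterable of (hour_of_day, value) pairs from past data.
    Returns {hour: (mean, stdev)} so each hour is judged against its own norm."""
    by_hour = defaultdict(list)
    for hour, value in history:
        by_hour[hour].append(value)
    # stdev needs at least two observations, so sparse hours are skipped
    return {h: (mean(vals), stdev(vals)) for h, vals in by_hour.items() if len(vals) >= 2}

def is_anomalous(baseline, hour, value, k=3.0):
    """Flag a value more than k standard deviations from its hour's mean."""
    mu, sigma = baseline[hour]
    if sigma == 0:
        return value != mu  # a perfectly flat hour: any change is unusual
    return abs(value - mu) / sigma > k

history = [(3, v) for v in (100, 110, 90, 105, 95)] + [(14, v) for v in (1000, 1050, 950, 1020, 980)]
baseline = build_hourly_baseline(history)
print(is_anomalous(baseline, 14, 1000))  # False: typical for 2 PM
print(is_anomalous(baseline, 3, 1000))   # True: far above the 3 AM norm
```

A single global average would blur these two hours together; keying the baseline by hour is the simplest form of seasonal adjustment.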

Detection Algorithms

Various approaches identify anomalies:

- Statistical Methods: Z-score analysis, control charts, regression-based detection

- Machine Learning: Supervised models for known anomaly types, unsupervised clustering for unknown patterns

- Time Series Analysis: ARIMA models, exponential smoothing, seasonal decomposition

- Isolation- and Neighbor-Based Methods: Isolation forests, local outlier factor analysis

- Density-Based Methods: DBSCAN clustering, Gaussian mixture models
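
Neighbor-based scoring can be illustrated with a simpler relative of the methods above: score each point by its distance to its k-th nearest neighbor, so points in sparse regions score high. This is a swapped-in teaching example, not an isolation forest or LOF (scikit-learn provides both as `IsolationForest` and `LocalOutlierFactor`). `knn_outlier_scores` is a hypothetical helper, and its O(n²) loop only suits small datasets.

```python
import math

def knn_outlier_scores(points, k=2):
    """Score each point by the distance to its k-th nearest neighbor.
    Requires more than k points."""
    scores = []
    for i, p in enumerate(points):
        # Distances from p to every other point, nearest first
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        scores.append(dists[k - 1])
    return scores

points = [(1.0, 1.0), (1.2, 0.9), (0.9, 1.1), (1.1, 1.0), (8.0, 8.0)]
scores = knn_outlier_scores(points)
print(scores.index(max(scores)))  # 4: the isolated point at (8, 8)
```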

Alerting and Response

Detected anomalies trigger appropriate actions:

- Severity Scoring: Classification of anomalies by potential impact and urgency

- Automated Alerts: Notifications to relevant stakeholders through multiple channels

- Investigation Support: Contextual information to aid human analysis

- Automated Responses: Pre-defined actions for well-understood anomaly types

- Feedback Loops: Incorporation of human feedback to improve detection accuracy
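
A minimal sketch of severity scoring and routing, assuming each anomaly already carries a z-score. The two thresholds, the metric names, and the action names are hypothetical placeholders. In practice, the tiers should reflect the business impact of each metric.

```python
def severity(z, warn=2.0, critical=3.0):
    """Map a z-score (how unusual the point is) to an alert tier."""
    magnitude = abs(z)
    if magnitude >= critical:
        return "critical"
    if magnitude >= warn:
        return "warning"
    return "ok"

# Hypothetical routing table: which action each tier triggers
ACTIONS = {"critical": "page on-call", "warning": "open ticket", "ok": "log only"}

def route_alert(metric, z):
    level = severity(z)
    return f"{metric}: {level} -> {ACTIONS[level]}"

print(route_alert("checkout_failures", 4.2))  # checkout_failures: critical -> page on-call
print(route_alert("page_load_ms", -2.4))      # page_load_ms: warning -> open ticket
```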

Types of Anomalies

Point Anomalies

Individual data points that are anomalous on their own, relative to the rest of the data:

- Single Value Outliers: One measurement significantly different from all others

Values that are unusual only in a particular context, or only as part of a group, are contextual or collective anomalies, covered below.

Contextual Anomalies

Data points anomalous in specific contexts:

- Time-Based Anomalies: Unusual values at specific times (holiday spikes, after-hours activity)

- Location-Based Anomalies: Geographic deviations from normal patterns

- Behavioral Anomalies: Actions inconsistent with established user or system behavior

- Conditional Anomalies: Values anomalous given specific conditions or combinations
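
Contextual checks often compare an event against the entity's own history rather than against a global norm. The sketch below assumes that login events carry a country code and an hour of day. It learns a per-user profile and reports which parts of a new login fall outside that profile. The profile fields and function names are hypothetical. A production system would more likely use frequencies or probabilities than simple set membership.

```python
def build_profile(logins):
    """logins: list of (country, hour) tuples from one user's past activity."""
    return {
        "countries": {country for country, _ in logins},
        "hours": {hour for _, hour in logins},
    }

def unusual_login_reasons(profile, country, hour):
    """Return human-readable reasons this login is unusual for this user."""
    reasons = []
    if country not in profile["countries"]:
        reasons.append(f"new country: {country}")  # location-based context
    if hour not in profile["hours"]:
        reasons.append(f"unusual hour: {hour}")    # time-based context
    return reasons

profile = build_profile([("US", 9), ("US", 10), ("US", 14), ("US", 17)])
print(unusual_login_reasons(profile, "US", 10))  # []
print(unusual_login_reasons(profile, "BR", 3))   # ['new country: BR', 'unusual hour: 3']
```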

Collective Anomalies

Groups of data points that are anomalous together:

- Sequence Anomalies: Unusual patterns in data sequences or time series

- Group Anomalies: Subsets of data that deviate from group norms

- Cluster Anomalies: Unusual clustering patterns in multivariate data

- Pattern Anomalies: Deviations from expected sequential or structural patterns
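
A sequence anomaly can consist entirely of individually normal points. The sketch below flags a long run of consecutive values on the same side of a center line, similar in spirit to the run rules used with control charts. The run length of 8 and the sample readings are illustrative assumptions.

```python
def first_long_run(values, center, run_length=8):
    """Return the start index of the first run of `run_length` consecutive
    values all above (or all below) `center`, or None if there is none."""
    run_start, side = 0, 0
    for i, v in enumerate(values):
        s = (v > center) - (v < center)  # +1 above, -1 below, 0 on the line
        if s == 0 or s != side:
            run_start, side = i, s       # the run breaks; start counting again
        if side != 0 and i - run_start + 1 >= run_length:
            return run_start
    return None

readings = [10.0, 11.0, 9.0, 10.5, 9.5] + [10.2] * 8
print(first_long_run(readings, center=10.0))  # 5
```

Each 10.2 reading sits only slightly above the center line and would pass any point-wise threshold. The anomaly is the run of eight in a row, which hints at a sustained shift.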

Applications Across Industries

Financial Services

Critical for fraud detection and risk management:

- Transaction Monitoring: Real-time detection of fraudulent credit card transactions

- Account Takeover Detection: Identification of unusual login patterns and account activity

- Market Anomaly Detection: Flagging unusual trading patterns and market manipulation

- Insurance Claim Analysis: Detection of fraudulent or unusual insurance claims

- Anti-Money Laundering: Monitoring for suspicious financial transaction patterns

Cybersecurity

Essential for threat detection and response:

- Network Intrusion Detection: Identification of unusual network traffic patterns

- User Behavior Analytics: Detection of compromised accounts through abnormal access patterns

- System Anomaly Detection: Monitoring for unusual system resource usage or performance

- Data Exfiltration Detection: Identification of unusual data access or transfer patterns

- Malware Detection: Recognition of anomalous system behavior indicating infection

Manufacturing and IoT

Critical for operational reliability:

- Equipment Failure Prediction: Early detection of machinery anomalies indicating impending failure

- Quality Control: Identification of defective products through sensor data analysis

- Supply Chain Monitoring: Detection of unusual patterns in logistics and inventory

- Energy Consumption Analysis: Flagging abnormal energy usage patterns

- Production Line Optimization: Detection of process inefficiencies and bottlenecks

Healthcare

Important for patient safety and operational efficiency:

- Vital Sign Monitoring: Detection of abnormal patient vital signs requiring immediate attention

- Medical Device Anomalies: Identification of malfunctioning medical equipment

- Patient Behavior Analysis: Detection of unusual patient activity patterns

- Drug Interaction Alerts: Flagging unusual medication patterns or dosages

- Hospital Resource Utilization: Monitoring for abnormal demand patterns

E-commerce and Retail

Enhances customer experience and prevents fraud:

- Fraudulent Transaction Detection: Real-time identification of suspicious purchase patterns

- Inventory Anomaly Detection: Flagging unusual stock movement or demand patterns

- Customer Behavior Analysis: Detection of unusual shopping or browsing patterns

- Supply Chain Disruptions: Early warning of supplier or logistics issues

- Recommendation Engine Optimization: Identification of unusual user preference patterns

Technical Implementation

Statistical Methods

Traditional approaches for anomaly detection:

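- Z-Score Analysis: Measuring how many standard deviations a value lies from the mean

- Modified Z-Score: A robust version that uses the median and median absolute deviation (MAD), so outliers in the baseline do not distort it

- IQR Method: Flagging values that fall more than 1.5 interquartile ranges below the first quartile or above the third quartile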

- Control Charts: Statistical process control for time series monitoring

- Regression Analysis: Detecting deviations from predicted values
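
The first three methods fit in a few lines of standard-library Python. This is a sketch: the cutoffs (3 for the z-score, 3.5 for the modified z-score with its 0.6745 scaling constant, 1.5 IQRs for the fences) are common conventions rather than universal rules, and the hourly visitor counts are made-up sample data.

```python
from statistics import mean, median, pstdev, quantiles

def zscore_outliers(xs, k=3.0):
    """Values more than k population standard deviations from the mean."""
    mu, sigma = mean(xs), pstdev(xs)
    if sigma == 0:
        return []
    return [x for x in xs if abs(x - mu) / sigma > k]

def modified_zscore_outliers(xs, k=3.5):
    """Median/MAD version: the outliers themselves barely move the baseline."""
    med = median(xs)
    mad = median([abs(x - med) for x in xs])
    if mad == 0:
        return []
    return [x for x in xs if 0.6745 * abs(x - med) / mad > k]

def iqr_outliers(xs, whisker=1.5):
    """Values outside Tukey's fences: [Q1 - 1.5*IQR, Q3 + 1.5*IQR]."""
    q1, _, q3 = quantiles(xs, n=4)
    spread = whisker * (q3 - q1)
    return [x for x in xs if x < q1 - spread or x > q3 + spread]

visits = [1000, 980, 1020, 1010, 990, 1005, 995, 100]  # made-up hourly counts
print(zscore_outliers(visits))           # []
print(modified_zscore_outliers(visits))  # [100]
print(iqr_outliers(visits))              # [100]
```

On this sample, the plain z-score misses the drop to 100. That single value inflates the standard deviation enough to keep its own z-score below 3. The median-based modified z-score and the IQR fences are not distorted this way, which is why they are preferred when the baseline may already contain outliers.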

Machine Learning Approaches

Advanced algorithms for complex pattern detection:

- Supervised Learning: Classification models trained on labeled normal/abnormal data

- Unsupervised Learning: Clustering and dimensionality reduction for unknown anomalies

- Semi-Supervised Learning: Combination of labeled and unlabeled data for training

- Deep Learning: Neural networks for complex pattern recognition in high-dimensional data

- Ensemble Methods: Combination of multiple models for improved accuracy
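
For the unsupervised case, scikit-learn's `IsolationForest` is a common starting point. The sketch below assumes that NumPy and scikit-learn are installed. It uses synthetic two-dimensional data with one injected outlier. The `contamination=0.01` setting is an assumed share of anomalies and determines where the model draws the line between the two labels.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic "normal" observations plus one obvious outlier at (8, 8)
rng = np.random.default_rng(0)
normal = rng.normal(loc=0.0, scale=1.0, size=(200, 2))
X = np.vstack([normal, [[8.0, 8.0]]])

# Unsupervised: no labels needed; contamination is the assumed anomaly share
model = IsolationForest(contamination=0.01, random_state=42)
labels = model.fit_predict(X)    # -1 = anomaly, 1 = normal
scores = model.score_samples(X)  # lower = more anomalous

print(labels[-1])               # label of the injected point
print(int(np.argmin(scores)))   # index of the most anomalous row
```

When labeled fraud or failure cases are available, the same features could instead feed a supervised classifier. The isolation forest is most useful when such labels are scarce.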

Time Series Analysis

Specialized methods for temporal data:

- ARIMA Models: Autoregressive integrated moving average for forecasting-based detection

- Exponential Smoothing: Weighted averaging for trend and seasonal anomaly detection

- Prophet: Meta's (formerly Facebook's) open-source forecasting library; points that fall outside its forecast uncertainty intervals can be flagged as anomalies

- LSTM Networks: Long short-term memory networks for sequence anomaly detection

- Seasonal Decomposition: Separating trend, seasonal, and residual components for analysis
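
Forecasting-based detection follows the same pattern regardless of model: predict the next value, then flag large forecast errors. The sketch below uses simple exponential smoothing (no trend or seasonal terms) as the forecaster. As the yardstick, it uses an exponentially weighted estimate of the forecast-error variance. The `alpha`, `k`, and `warmup` values are assumed settings chosen for illustration. An ARIMA or Prophet forecast could replace the smoothing step without changing the flagging logic.

```python
def smoothing_anomalies(series, alpha=0.3, k=3.0, warmup=5):
    """Flag indices whose one-step-ahead forecast error exceeds k times a
    running (exponentially weighted) estimate of the error's spread."""
    level = series[0]  # current smoothed forecast
    err_var = 0.0      # running estimate of squared forecast error
    flagged = []
    for t in range(1, len(series)):
        error = series[t] - level
        if t > warmup and err_var > 0 and abs(error) > k * err_var ** 0.5:
            flagged.append(t)
            continue   # keep the spike out of the level and error estimates
        err_var = (1 - alpha) * err_var + alpha * error ** 2
        level += alpha * error
    return flagged

sales = [100, 102, 98, 101, 99, 100, 103, 97, 100, 180, 101, 99]
print(smoothing_anomalies(sales))  # [9]
```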

Implementation Frameworks

Technology platforms for anomaly detection:

- Real-Time Streaming: Apache Kafka for event streaming and Apache Flink for stateful stream processing

- Machine Learning Platforms: TensorFlow, PyTorch, scikit-learn for model development

- Cloud Services: Amazon SageMaker, Google Cloud Vertex AI (successor to AI Platform), Azure Machine Learning

- Specialized Tools: Dedicated anomaly detection platforms and APIs

- Integrated Solutions: Built-in capabilities in business intelligence platforms

Challenges and Solutions

Challenge: False Positives

Problem: Normal variations incorrectly flagged as anomalies.

Solution:

- Implement multi-level thresholding based on severity and impact

- Use contextual information to reduce false alarms

- Apply machine learning to learn from feedback and reduce false positives

- Establish clear definitions of what constitutes an actionable anomaly

- Implement alert fatigue prevention through intelligent filtering
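
One common way to cut false alarms caused by brief noise is to alert only when a breach persists. The sketch below assumes a per-point list of raw flags from any detector. It fires a single alert once a streak reaches `min_consecutive` points. The helper name and its default value are hypothetical.

```python
def debounce(flags, min_consecutive=3):
    """Return indices where an alert fires: once per episode, when a streak of
    raw anomaly flags first reaches `min_consecutive` points in a row."""
    alerts, streak = [], 0
    for i, flagged in enumerate(flags):
        streak = streak + 1 if flagged else 0
        if streak == min_consecutive:
            alerts.append(i)
    return alerts

raw = [False, True, False, True, True, True, True, False, True]
print(debounce(raw))  # [5]: the isolated blips at 1 and 8 are suppressed
```

The trade-off is latency: a real incident is reported `min_consecutive - 1` points later than the first raw flag.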

Challenge: Concept Drift

Problem: Normal behavior changes over time, making historical baselines obsolete.

Solution:

- Implement adaptive models that continuously update baselines

- Use online learning techniques for real-time model adaptation

- Establish feedback loops for model recalibration

- Monitor model performance and trigger retraining when needed

- Use ensemble methods combining multiple time windows
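
A deliberately simple way to decide when retraining is needed is to compare a recent window against the window the baseline was built on. The sketch below measures the shift in the mean in units of the reference standard deviation. The window contents and the threshold of 2 are assumptions. Established streaming drift detectors such as Page-Hinkley or ADWIN handle this more rigorously.

```python
from statistics import mean, stdev

def mean_shift_drift(reference, recent, threshold=2.0):
    """True if the recent window's mean has moved more than `threshold`
    reference standard deviations away from the reference window's mean."""
    sigma = stdev(reference)
    shift = abs(mean(recent) - mean(reference))
    if sigma == 0:
        return shift > 0
    return shift / sigma > threshold

reference = [100, 102, 98, 101, 99]  # window the baseline was built on
print(mean_shift_drift(reference, [101, 99, 100, 102, 98]))    # False
print(mean_shift_drift(reference, [110, 112, 108, 111, 109]))  # True: re-baseline
```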

Challenge: High-Dimensional Data

Problem: Complex datasets with many variables make anomaly detection difficult.

Solution:

- Apply dimensionality reduction techniques such as PCA (t-SNE is better suited to visual exploration than to scoring new data)

- Use feature selection to focus on most relevant variables

- Implement multivariate anomaly detection methods

- Leverage deep learning for automatic feature extraction

- Use domain expertise to identify key indicators
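
PCA can serve as a detector as well as a preprocessing step. Project each row onto the top principal components, reconstruct it, and treat the reconstruction error as the anomaly score. The minimal NumPy sketch below assumes numeric features on comparable scales; standardize them first if they are not. The data is a synthetic 3-D example in which normal rows lie on a line.

```python
import numpy as np

def pca_reconstruction_error(X, n_components=1):
    """Anomaly score per row: distance between the row and its projection
    onto the top `n_components` principal components."""
    Xc = X - X.mean(axis=0)                      # center the data
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    W = Vt[:n_components]                        # top principal directions (k x d)
    reconstructed = Xc @ W.T @ W                 # project down, then back up
    return np.linalg.norm(Xc - reconstructed, axis=1)

# Normal rows lie on a line in 3-D; the last row sits off that line
X = np.array([[t, 2 * t, 3 * t] for t in range(1, 11)] + [[15.5, 6.0, 16.5]], dtype=float)
errors = pca_reconstruction_error(X)
print(int(np.argmax(errors)))  # 10
```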

Challenge: Imbalanced Data

Problem: Anomalies are rare, making it difficult to train accurate models.

Solution:

- Use anomaly detection algorithms designed for imbalanced data

- Implement synthetic anomaly generation for training

- Apply cost-sensitive learning with different misclassification penalties

- Use ensemble methods combining multiple detection approaches

- Implement unsupervised methods that don't require labeled anomalies
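
One simple form of cost-sensitive decision-making is to leave the detector unchanged and tune its alert threshold. The goal is the lowest total error cost on labeled history, with a missed anomaly priced far above a false alarm. The 50:1 cost ratio and the sample scores below are assumptions for illustration.

```python
def pick_threshold(scores, labels, cost_fp=1.0, cost_fn=50.0):
    """Choose the score cutoff (flag if score >= cutoff) that minimizes total
    misclassification cost on labeled history. labels: 1 = anomaly, 0 = normal."""
    best_t, best_cost = None, float("inf")
    for t in sorted(set(scores)):
        cost = 0.0
        for s, y in zip(scores, labels):
            flagged = s >= t
            if flagged and y == 0:
                cost += cost_fp   # false alarm
            elif not flagged and y == 1:
                cost += cost_fn   # missed anomaly
        if cost < best_cost:
            best_t, best_cost = t, cost
    return best_t, best_cost

scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.9]
labels = [0, 0, 0, 0, 1, 0, 1]
print(pick_threshold(scores, labels))  # (0.6, 1.0)
```

With the 50:1 ratio, the cutoff lands at 0.6, accepting one false alarm to catch both anomalies. If misses were cheaper than false alarms (for example, `cost_fn=0.5`), the same data would push the cutoff up to 0.9.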

Challenge: Real-Time Processing

Problem: Detecting anomalies in streaming data requires fast processing.

Solution:

- Use streaming analytics platforms for real-time processing

- Implement incremental learning for continuous model updates

- Apply approximation algorithms for faster processing

- Use distributed computing for scalability

- Implement multi-stage filtering to reduce computational load
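
Incremental learning for streams can be as simple as updating summary statistics one point at a time. The hypothetical `OnlineDetector` class below uses Welford's algorithm to maintain a running mean and variance in constant memory, and it scores each point before absorbing it. The `k` and `warmup` values are assumed defaults.

```python
import math

class OnlineDetector:
    """Streaming z-score detector with O(1) memory (Welford's algorithm)."""

    def __init__(self, k=3.0, warmup=10):
        self.k, self.warmup = k, warmup
        self.n, self.mean, self.m2 = 0, 0.0, 0.0  # count, running mean, sum of squared deviations

    def update(self, x):
        """Return True if x is anomalous relative to the points absorbed so far."""
        is_anomaly = False
        if self.n >= max(self.warmup, 2):  # need >= 2 points for a sample variance
            std = math.sqrt(self.m2 / (self.n - 1))
            is_anomaly = std > 0 and abs(x - self.mean) / std > self.k
        if not is_anomaly:  # keep anomalies out of the baseline
            self.n += 1
            delta = x - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (x - self.mean)
        return is_anomaly

detector = OnlineDetector()
stream = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10, 10, 50, 10]
results = [detector.update(x) for x in stream]
print([i for i, flagged in enumerate(results) if flagged])  # [11]
```

Excluding flagged points keeps a spike from inflating the baseline. The flip side is that a genuine level shift would be flagged indefinitely, so this approach pairs naturally with a drift check that triggers re-baselining.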

Best Practices

Define Clear Objectives

Establish specific anomaly detection goals:

- Identify the types of anomalies most important to detect

- Define acceptable false positive and false negative rates

- Establish response procedures for different anomaly types

- Set performance metrics and success criteria

- Align detection capabilities with business impact

Choose Appropriate Methods

Select detection approaches based on use case:

- Use statistical methods for well-understood, stable processes

- Apply machine learning for complex, evolving patterns

- Combine multiple methods for comprehensive coverage

- Consider computational requirements and real-time needs

- Validate methods against historical data before deployment
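
Before deployment, a backtest replays historical data through the detector and compares its flags with incidents confirmed afterward. The minimal sketch below covers the scoring step and assumes that both the flags and the confirmed incidents are available as sets of event IDs. The helper name is hypothetical.

```python
def precision_recall_f1(predicted, actual):
    """predicted: IDs the detector flagged; actual: IDs confirmed as anomalies."""
    tp = len(predicted & actual)                          # correctly flagged
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(actual) if actual else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

p, r, f = precision_recall_f1(predicted={1, 2, 3, 4}, actual={2, 3, 5})
print(p, round(r, 3), round(f, 3))  # 0.5 0.667 0.571
```

Here, half of the alerts were real (precision 0.5), and two of the three real incidents were caught (recall about 0.67). Comparing these numbers with the acceptable false positive and false negative rates defined up front tells you whether the method is ready to ship.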

Implement Robust Monitoring

Establish comprehensive monitoring frameworks:

- Track detection accuracy and false positive rates

- Monitor system performance and latency

- Implement automated model validation and updates

- Establish feedback mechanisms for continuous improvement

- Create audit trails for compliance and debugging

Ensure Scalability

Design systems that can handle growing data volumes:

- Use distributed computing platforms for scalability

- Implement efficient algorithms and data structures

- Plan for elastic resource allocation

- Design modular architectures for component updates

- Establish data sampling strategies for large datasets

Focus on Actionable Insights

Ensure anomalies drive meaningful actions:

- Classify anomalies by business impact and urgency

- Provide contextual information for investigation

- Suggest specific response actions and procedures

- Integrate with workflow and alerting systems

- Measure the effectiveness of anomaly-driven interventions

The Future of Anomaly Detection

AI-Enhanced Detection

Artificial intelligence will advance anomaly detection capabilities:

- Deep Learning Models: More sophisticated pattern recognition in complex data

- Automated Feature Engineering: AI-driven identification of relevant indicators

- Explainable AI: Clear explanations of why anomalies were detected

- Adaptive Learning: Systems that automatically adjust to changing environments

- Multi-Modal Analysis: Integration of text, images, and structured data

Real-Time and Streaming Analytics

Immediate anomaly detection will become standard:

- Edge Computing: Local anomaly detection on IoT devices

- 5G-Enabled Analytics: Ultra-low latency detection and response

- Streaming ML: Continuous model updates with streaming data

- Event-Driven Architectures: Instant response to detected anomalies

- Predictive Maintenance: Early warning systems for equipment and systems

Integrated Security and Operations

Anomaly detection will become part of comprehensive platforms:

- Unified Observability: Combined monitoring of systems, security, and business metrics

- Automated Response: AI-driven remediation of detected anomalies

- Threat Intelligence: Integration with global threat databases

- Business Context: Anomaly detection informed by business rules and objectives

- Collaborative Investigation: Shared workspaces for anomaly analysis and response

Anomaly detection transforms reactive monitoring into proactive intelligence, enabling organizations to identify and respond to unusual patterns before they become critical issues or missed opportunities. By automatically surfacing deviations from normal behavior, these systems provide early warning capabilities that are essential for maintaining operational reliability, security, and competitive advantage.

Platforms like FireAI enhance anomaly detection through advanced machine learning algorithms, real-time processing capabilities, and integrated alerting systems that make anomaly identification and response more efficient and effective.


Frequently Asked Questions

What is anomaly detection?

Anomaly detection identifies data points, patterns, or events that deviate significantly from normal behavior. Using statistical methods and machine learning algorithms, it automatically flags unusual occurrences in data streams, enabling early detection of fraud, system failures, security threats, and emerging business opportunities.

Why is anomaly detection important?

Anomaly detection is important because it enables early identification of problems before they become critical, supports proactive decision-making rather than reactive responses, helps prevent fraud and security breaches, identifies emerging opportunities, and automates monitoring of complex systems and processes that would be difficult to track manually.

What are the types of anomalies?

Types include point anomalies (single unusual data points), contextual anomalies (values unusual in specific contexts like time or location), and collective anomalies (groups of data points that are anomalous together). Each type requires different detection approaches and has different business implications.

What are common applications of anomaly detection?

Common applications include fraud detection in financial transactions, intrusion detection in cybersecurity, equipment failure prediction in manufacturing, vital sign monitoring in healthcare, network traffic analysis for IT operations, quality control in production processes, and user behavior analysis for personalized services.

How does anomaly detection work?

Anomaly detection works by establishing baselines of normal behavior using statistical methods or machine learning models, continuously monitoring new data against these baselines, calculating deviation scores or probabilities, and flagging observations that exceed threshold values. Methods include statistical analysis, clustering algorithms, and deep learning approaches.

What are the main challenges in anomaly detection?

Challenges include high false positive rates from normal variations being flagged as anomalies, concept drift where normal behavior changes over time, difficulty with high-dimensional data, imbalanced datasets where anomalies are rare, and the need for real-time processing in streaming data environments.

Can anomaly detection be automated?

Yes, modern anomaly detection can be highly automated using machine learning algorithms that continuously learn from data, statistical methods that establish automatic thresholds, and real-time processing systems that monitor data streams. However, human oversight is still valuable for complex cases and model validation.

What tools are used for anomaly detection?

Tools include specialized platforms such as Splunk for IT monitoring and Darktrace for cybersecurity, ML libraries such as scikit-learn and TensorFlow, streaming platforms such as Apache Kafka and Apache Flink, and built-in capabilities in business intelligence platforms. Cloud services from AWS, Azure, and Google Cloud also provide anomaly detection capabilities.

How is anomaly detection performance evaluated?

Performance is evaluated using metrics such as precision (the share of flagged items that are true anomalies), recall (the share of true anomalies that get flagged), F1-score (the harmonic mean of precision and recall), false positive rate, and business impact measures. Evaluation considers both statistical accuracy and practical usefulness in real-world applications.

What is the future of anomaly detection?

The future includes AI-enhanced detection with deep learning models and explainable AI, real-time streaming analytics with edge computing, integrated security and operations platforms, automated response systems, and multi-modal analysis combining different data types. These advances will make anomaly detection more accurate, faster, and more integrated into business processes.

Related Questions In This Topic

What is Augmented Analytics? Definition, Benefits, and Examples

Augmented analytics uses AI and machine learning to automate data preparation, insight discovery, and natural language generation. Learn how augmented analytics works, which benefits it provides, and see real examples of automated insights.

What is Predictive Analytics? Methods, Examples, and Business Applications

Predictive analytics uses statistical algorithms and machine learning to forecast future outcomes based on historical data. Learn how predictive modeling works, which methods are used, and how businesses apply it for sales forecasting, risk management, and strategic planning.

What is Prescriptive Analytics? Examples, Benefits, and Use Cases

Prescriptive analytics uses AI and optimization algorithms to recommend specific actions for optimal results. Learn how prescriptive analytics works, which techniques it uses, and how businesses apply it for decision automation and optimization.

What is AI-Powered Business Intelligence? Features, Benefits, and Use Cases

AI-powered business intelligence integrates AI and machine learning with traditional BI to automate insights, enable natural language queries, and provide predictive analytics. Learn how AI BI works, which features matter, and how businesses use it.

Related Guides From Our Blog

Democratizing Data: How AI Analytics Levels the Playing Field for Small Businesses and Freelancers

For decades, data-driven decision making was a luxury that only enterprises could afford. Big companies hired data scientists, purchased expensive BI tools, and built complex data warehouses. In exchange, they received precise insights that guided budgets, strategy, and growth.

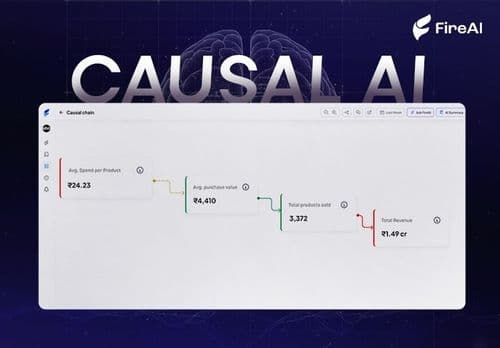

Causal AI Explained: Uncovering the “Why” in Data with Machine Learning

Causal AI reveals not just what will happen, but why — and exactly what changes if you act differently. It turns predictions into high-ROI decisions by uncovering true cause-and-effect in your data.

From Gut Feel to Data-Driven: A Marketer’s Guide to Embracing AI Insights

A practical guide for modern marketers on shifting from instinct-driven decisions to AI-powered, data-driven insights with real examples of how tools like FireAI make analytics conversational and actionable.